About the guest

The Future of PLM expert panel discusses how product memory architecture supports AI agent autonomy in engineering and manufacturing workflows.

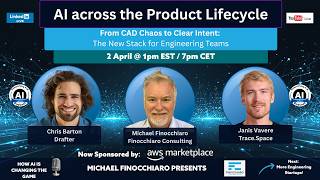

Episode summary

The Future of PLM panel explores product memory as the critical missing layer that bridges PLM systems, digital threads, and AI agents—enabling autonomous systems to understand product context and make intelligent decisions throughout the product lifecycle.

Key takeaways

- Product memory is the contextual layer AI agents need to understand product history and decisions

- Digital thread alone is insufficient without semantic product memory

- Product memory must integrate design intent, manufacturing decisions, and operational feedback

- AI agents require queryable product memory to make autonomous decisions

- Legacy PLM systems lack the graph-based structures needed for product memory

Topics discussed

Episode Summary

Oleg Shilovitsky (OpenBOM) introduces the concept of product memory as a critical missing layer that sits between PLM systems, digital threads, and AI agents. This panel discussion explores how organizations can capture decision context, manage semantic consistency across multiple systems of record (PLM, ERP, MES, PIM, e-commerce), and enable AI agents to reason about products with full historical and contextual information.

The panelists discuss the practical challenges of data governance, ontology management, and human readiness for product memory adoption. Key themes include the importance of capturing not just decisions but the reasoning behind them; the role of semantic consistency (meta-layer governance) in preventing data corruption as information flows across systems; and the fundamental tension between automating data capture and protecting employee knowledge and IP rights.

Product memory, as discussed, is not a replacement for PLM systems, but rather a next-level abstraction that allows organizations to build better AI agents and gain insights from the synthesis of information across traditionally siloed systems.

Full Transcript

Michael Finocchiaro (00:16)

And we're live. This is Michael Finocchiaro coming to you once again for the Future of PLM webcast with special guest Rob McAveney, CTO of Aras, and hopefully David Segal from TCS will be joining us as well. Today, we're going to be talking about this problem where, for decades, PLM and manufacturing systems have been chasing this ever-escaping single source of truth idea. And we're wondering whether that's...

That's definitely necessary, but is it enough when you've got multiple systems and the digital thread gets broken because things move on? And it often doesn't even give us the reason for decisions, the design intent, the assumptions that were rejected, and so forth. So, debatably, Oleg was the first to discuss this online, although Benedict Smith and many others would say that they were the first ones to talk about this.

Oleg, I will say, first publicly blogged about the idea of a product memory. So we decided on our last call, which was about eBOM and mBOM, to have a special call about product memory. And so I'm joined by five experts here. Hopefully, again, David will be joining. I have Oleg from OpenBOM, which is already a system based on a graph. And he's going to be talking about coordination and distributed product data and so forth.

Rob McAveney, the CTO of Aras, who mentioned product data in his keynote, which was sort of the spark for this conversation. Brion Carroll, who's also written on the subject, and I'm sure he'll put a link to his article in the notes and comments. David Segal, if he arrives, runs a business at Tata Consultancy Services around enterprise architecture and IT conversions, and hopefully he'll be joining us. And last but not least, even if he doesn't have the bowtie today,

Brion Carroll (01:47)

Ha ha.

Michael Finocchiaro (02:10)

Jonathan Scott of Razorleaf, who has been around the industry more or less forever, like everybody else here. And a word about our sponsor: we are sponsored by AWS, who would like you to click on the link for a white paper, which will be down in the comments below. Without any further ado, let's define product memory. So Oleg, you were the first one, allegedly, to come up with this phrase, which might be something we've always been talking about and thinking about. We just didn't

Jonathan Scott (02:10)

Sorry.

Brion Carroll (02:17)

Ha ha ha ha.

Rob McAveney (02:18)

No.

Michael Finocchiaro (02:39)

give it a name. So would you like to, I mean, you've argued for a long time that a lot of product work happens outside of the traditional PLM workflow: emails and spreadsheets and messages and supplier exchanges. So how did you come up with the phrase, and what should that memory capture that PLM systems today do not?

Oleg Shilovitsky (03:02)

Yeah, thank you. Thank you, Michael. So it was an intersection of two parallel observations. One of them is what I observed happening in the PLM industry.

We've been talking about a single source of truth and how to organize the single source of truth. And some companies said, we will put everything in a single database and call it the single source of truth. And then we realized the problem: we cannot do it. And then the next step in the evolution of the single source of truth was the digital thread and how everything can be connected. So that was the one path.

And then, in parallel, with all the AI technologies and everything that we observed, I came to the conclusion that memory is really important for agents and for AI, to get the right context. So I think those were two parallel threads.

And then the realization that we live a lot outside of formal systems, in different places and different threads and different conversations, brought me to the conclusion that we can create a single source of truth in different systems of record. We can connect them with the digital thread, but still something is missing. And that something is not a replacement for everything that we had before, it's just the next level. And the next level, somehow I came to the realization, is a memory of everything,

because it's a life cycle and a memory of everything. So that belongs to the product, because everything we develop is a product. So I think that was the realization. I certainly believe this is not a proprietary name, for sure. I was looking for something that resonates with people's understanding. And that's why I started to share those thoughts. And I think that's probably the simplest pitch that I have. So it's a layer

Brion Carroll (04:46)

Ha ha ha ha.

Oleg Shilovitsky (04:59)

that keeps contextual information and allows people to go back and reason on top of this information. So there are a lot of ideas about what can be done with this, but that's what it is from my perspective today.

and you're muted.

Brion Carroll (05:21)

You're on mute.

Okay.

Michael Finocchiaro (05:26)

Well, some people are complaining that they hear me hemming and hawing and getting bored listening to you guys. So I was supposed to mute myself. So yeah, that's pretty bad. Rob, it was two weeks ago, at the big Aras global, well, at least the US version of the global meeting. You had a really awesome keynote. I think globally everybody...

Brion Carroll (05:28)

Ha ha.

Oleg Shilovitsky (05:31)

You

Brion Carroll (05:34)

It's the snoring, the snoring, that's what gets me.

Michael Finocchiaro (05:52)

Just use it. Everybody's response to it was really positive. I wrote about it, and I think Oleg and Jonathan wrote about it as well. You talked about product memory, and you've been talking about adaptive PLM and the importance of dependency graphs for a while: that traditional PLMs could tell us that part A is in assembly B or that change Y is affected by item X, but they often don't expose the richer dependency structure that runs across requirements, suppliers, and cost. So why are these dependencies becoming as important as the records themselves?

Rob McAveney (06:28)

Yeah, so it should be clear, I think, that there's a lot of context that's buried in unstructured data that is often attached to the digital thread as opposed to being a native part of the digital thread. So consider a Word document or an Excel file that contains references to part numbers or to quality plans, for example, that have all sorts of knowledge embedded in these unstructured documents. But that knowledge is not exposed as records in a database. It is exposed within unstructured content.

And so what a dependency graph does is start to pick up references. So for example, if I had a product catalog that references model numbers, there might be a thousand model numbers inside a product catalog. But those don't have any real link back to the definition of what that model represents in terms of its bill of materials, in terms of where it's produced, its, you know, eBOM, mBOM, all that good stuff. And so the dependency graph is really this idea of a layer on top of what we consider a traditional digital thread, to be able to provide that additional context.

And then if we extend that to the product memory concept, it is providing additional context, hopefully brought to where the user is actually making decisions. We bring that additional context into the mix such that we get the full scope of what the user or users were seeing at the time they made the decision, at the time we're collecting that decision trace to add to the context graph and the broader digital thread.

Michael Finocchiaro (08:25)

Awesome. Well, that's really clear, Rob. Brion, you've also written on this. Do you have anything to add to the definitions that Rob and Oleg have already given us?

Brion Carroll (08:36)

No, I think the key is you can have silo systems. Let's say we cherry-pick assortment planning, PLM, ERP, PIM, and e-commerce, right? And if those systems all have their core common data, passed along or equivalent in the different locations or silo systems, they also have additional data. Like, ERP will have information about shipping and distribution and so on and so forth. And e-comm may have some further verbosity in regards to describing the product that isn't in PLM or anywhere else. That selected data, if pushed into product memory, because I see product memory as almost an orb of data that's being fed by all of these silo systems as they transact. And so the key here is making sure that those silo systems are, I call it, "intimated,"

meaning intelligently integrated, not just posting out a CSV and sucking it in and hoping everything's right or whatever, or letting data come in and changing it and not sending it back. That'd be like, you know, that's tough. So that consistency, you know, a digital solution group, call it digital fluidity from mind to market, right? Which is the content flowing. If you can have it so that it's posting selective data to product memory,

kind of like what Oleg's saying in regards to the creative BOM, or what Rob was saying in relationship to document segments or pieces of document content pointing back to the documents themselves. So you're not burdening product memory with all of the repeated data. You're being selective. That is where you have a perfect foundation for AI to then engage and begin to build out and pull out content that is clear.

If you don't create consistency as the data moves, I call it "the last one in wins," right? Because if you've got, like, retail price, and you've set it in assortment planning and you've reinforced it in PLM by way of the cost model and the margin requirements, and then you send it to ERP and they go, "Thanks," whoosh. They downplay it 20% because they want to push things out to e-comm or wherever. That last retail price becomes the retail price.

And assortment planning is going, "What happened to our 30%? We don't even have a margin on this." And PLM's going, "Hey, wasn't me." So you've got to make sure that data governance is intimate with all the content as it goes from silo to silo to silo, because that will be the content pushed into product memory.
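Editor's note: the "last one in wins" problem Brion describes can be illustrated with a minimal Python sketch. It assumes one simple governance rule that the panel did not specify: each shared attribute has a single owning system, and only that system may change it. The class name `GovernedAttribute`, the system names, and the prices are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class GovernedAttribute:
    """A shared attribute with one owning system and a log of every attempted change."""
    name: str
    owner: str  # hypothetical rule: only this system may change the value
    value: Optional[float] = None
    history: List[Tuple[str, float, bool]] = field(default_factory=list)

    def propose(self, system: str, new_value: float) -> bool:
        """Accept the change only from the owning system; record every attempt."""
        accepted = system == self.owner
        self.history.append((system, new_value, accepted))
        if accepted:
            self.value = new_value
        return accepted


# Assortment planning owns retail price; the ERP markdown is logged but not applied.
price = GovernedAttribute("retail_price", owner="assortment_planning")
price.propose("assortment_planning", 100.0)
price.propose("erp", 80.0)
print(price.value)    # 100.0
print(price.history)  # both attempts are kept, including the rejected one
```

Keeping the rejected attempt in `history` is a deliberate choice in this sketch: the attempted markdown is itself context that a product memory might want to retain, even though it never became the value of record.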

Michael Finocchiaro (11:23)

Jonathan had his hand up, so you probably wanted to react to that. I was also wondering how this can help companies understand the impact of these small changes, like this change that Brion was just talking about, and how that impacts the rest of the organization. Go ahead, Jonathan.

Jonathan Scott (11:39)

Yeah,

I think you do have to watch that. You have to be careful about how changes in the data affect the memory, right? And what point in time do you want the memory, right? Are you looking for the old stuff, the recent stuff, the whole picture, that kind of thing, right? So to that point about change, I think that is important. What I wanted to add onto this is something I see, you know, pragmatically in implementations. It gets in the way. It's going to get in the way of this idea of product memory.

We talk about building out digital threads for people to connect up all these pieces of information. And we absolutely need to do that. One of the things that gets in the way, though, is terminology. It's language, it's communication, right? It's a basic thing. What gets in the way is that I don't mean the same thing in engineering when I talk about a part as they do in manufacturing when they talk about a part, right? So I don't want to get into too many buzzwords and academic theories, but semantics matter, right?

David Segal (12:24)

it.

Jonathan Scott (12:39)

What do you mean by the thing that you say, the piece of data you're talking about? So when we start talking about putting it into product memory, we have to fix those things first. We have to all be talking about the same thing before we commit it to a collective memory, because otherwise nobody's going to understand, right? And AI is not going to understand us either. That's my take on, when we think about product memory, what we need to be doing to get there.

Michael Finocchiaro (13:04)

Thanks, Jonathan. David, I'm glad you could join us. I'm sorry there was a bit of a link mix-up there, but you're here. David, you come from TCS, so you're dealing with customers, thousands of customers worldwide, implementing this stuff all the time. And I wanted to bring you in from that enterprise architecture side. Product memory sounds like a PLM idea, but if we're honest, it really spans a lot of other things that you're dealing with. ERP, MES, QMS, ALM,

David Segal (13:09)

technical difficulty.

Michael Finocchiaro (13:33)

suppliers and plants and operational systems. So for you, how does product memory fit in a broader enterprise architecture for AI and digital thread and from your TCS perspective, from your experience?

David Segal (13:46)

Sure. So from a global system integrator perspective, or from a large enterprise system integration perspective, the landscape of multi-PLM systems and multi-ERP systems is a given. And it's not about standardizing on one particular technology or orchestrating the digital thread with the technology which is compatible.

And it was always the challenge to orchestrate the data traceability and data access. And this is why there were the different, like, master data management systems, MDM systems, also different data lake capabilities. Hyperscalers today are offering this type of capability as well. However, with the effort from the PLM vendors on this platformization, like what we see from Siemens, for instance, with the whole Xcelerator portfolio, or the Dassault Systèmes 3DEXPERIENCE platform. PTC is also offering something as a one digital thread platform, an intelligent PLM platform. Aras is, I know, also trying to orchestrate digital threads across different disciplines.

But the problem or challenge remains: what is the actual data needed for the engineering tasks, and how do we improve engineering productivity? Maybe those engineers do not need to have all the systems together at their fingertips. They need to have the data. So with the rise of semantic definition of the data, it became easier to extract that semantic definition from different data models, from different systems of record, into the abstract layer. And that abstract layer now becomes a kind of knowledge-graph-based umbrella on top of different engineering systems or systems of record. And machine learning capabilities run on this record and enable this ability for engineers to access it, and then you can create multiple agents on top of it. So that's actually reshaping the approach of the system integrators going forward with managing multiple systems of record. That's how the industry is changing.

Enterprises are also changing their approach to creating those orchestration platforms. Just today, I was with one large enterprise that is building a change orchestration platform on top of their multiple PLM and ERP systems, where the different change capabilities get rationalized, and not in the PLM platform.

So the question is whether PLM systems, current PLM systems, are ready for this product memory movement, and whether the vendors will find themselves compatible with those product memory umbrellas that enterprises are creating, regardless of what kind of vendor offerings they have. That was a little bit longer.

Michael Finocchiaro (17:38)

David, that's okay. You got here late, so you got an extra minute there. You had your hand up, Brion, but I actually was going to direct a question to you, because I think we're going to talk a little bit more in terms of a reality check, right? Like, where does product memory break today, and why doesn't it work? And so I was going to ask you, in terms of integration, when product data lives across multiple systems and each system contains some piece of the

truth of the single source of truth. One system owns the engineering structure, another owns the manufacturing planning, another owns cost. How do companies avoid product memory becoming just another inconsistent layer across all these systems that are inconsistent?

Brion Carroll (18:21)

Well, that's the key. I mean, it's kind of like if you wanted to be a vocal coach, you've got to know how to sing, right? Ooh, that was weird. But yes, it's true. You have to learn how to sing. And if you don't have pitch-perfect singing skills, you can't be a vocal coach, right? Just don't even bother. Don't walk down that path. Forget it. They don't want you. The point is the same thing exists here. And David was going through

multi-PLMs, multi-ERPs, and how each contributes, as it needs to, content that relates to either the foundation of a new product or part or sub-assembly, or the change of an existing product, part, or sub-assembly. And that goes to Oleg. By the way, Oleg, I never congratulated you for coming up with product memory in an open court, right? So here we are, congratulations. It's always good to have a, you should trademark it though, bro. You know what I mean?

Oleg Shilovitsky (19:18)

No,

no, no, no.

Michael Finocchiaro (19:19)

Except he's gonna get sued by Benedict Smith and Jon

Brion Carroll (19:19)

It's an option.

Michael Finocchiaro (19:22)

Snow, who are in the chat saying they talked about this in 2015 and 15 times last year.

Brion Carroll (19:26)

Yeah, I was talking about it in 1805. I

remember my grandfather brought it up.

Oleg Shilovitsky (19:29)

We are going to radio and television invention. I think it's the same part.

Brion Carroll (19:33)

Hahahaha.

Michael Finocchiaro (19:34)

I think there's probably a grave

somewhere with it, you know, in stone, but anyway.

Brion Carroll (19:38)

Right. So,

so, but going back to the anarchy that could exist in data moving from system to system, and especially when the data is common. I gave an example of retail price, but there's a whole slew. Think of it: I've got a description of what the product is, features and benefits, in assortment planning or product line management. And that gets totally ignored when they post things up on the web. Well, that's blasphemy, right? The product description, features, and benefits should flow through into e-commerce, and that also should flow into product memory.

So the key is you can't not post when transactions are occurring. As you're releasing products out of PLM, that might be the gate that says, okay, good enough, put it into product memory. Then it goes into the ERP. It gets fudged around, new data gets added, posted to product memory, and then it goes into PIM or it goes into e-commerce or SCM, supply chain management. Each has common data that's coming down the digital fluidity, right? Digital fluidity from mind to market. And each brings its own little bucket of stuff.

And the product memory should be gated by: what do we want up there? Not everything goes up there, but what do we want up there? And there has to be an ontology or a meta layer that says, "Whoa, that data should have been equivalent. I don't know who you are, but get away from me," right? It shouldn't be allowed in unless it meets the rules of equivalence, right? Or if it's something, and I'm not sure who talked about it, maybe it was Rob, maybe it was you, Michael, where you have gender of clothing that in PLM is called men's, women's, kids, and unisex. But over in ERP, it's called 00, 01, 02, 03, and is labeled sex.

Oleg Shilovitsky (21:11)

Thank

Brion Carroll (21:36)

You've got to know what you're doing, bro. You've got to know that gender and sex are equivalent and that the transformation makes it so that when it goes into product memory, we remember where you came from. We remember what you used to be called. And we're going to translate that back when we talk about insights and corrective actions and whatever. And so it all really has to be that ontology layer or that meta layer that permits or doesn't permit content into product memory. That's how you get product memory

Brion Carroll (22:05)

where you can trust it. And that's the key, right? You gotta be able to trust it.
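Editor's note: the ontology or meta layer Brion describes, which admits data only when it meets the rules of equivalence and remembers what each value used to be called, might look something like the following Python sketch. The pairing of ERP codes 00 to 03 with men's, women's, kids, and unisex is assumed from the order in which they were listed, and the names `EQUIVALENCE`, `MemoryFact`, `admit`, and `translate_back` are hypothetical.

```python
from dataclasses import dataclass
from typing import Tuple

# Hypothetical equivalence rules: (system, local attribute) -> (canonical attribute, value map).
EQUIVALENCE = {
    ("PLM", "gender"): ("gender", {"men's": "mens", "women's": "womens",
                                   "kids": "kids", "unisex": "unisex"}),
    ("ERP", "sex"): ("gender", {"00": "mens", "01": "womens",
                                "02": "kids", "03": "unisex"}),
}


@dataclass(frozen=True)
class MemoryFact:
    """A canonical fact plus the provenance needed to translate it back."""
    attribute: str
    value: str
    source_system: str
    source_attribute: str
    source_value: str


def admit(system: str, attribute: str, value: str) -> MemoryFact:
    """Gate: reject anything that has no rule of equivalence."""
    rule = EQUIVALENCE.get((system, attribute))
    if rule is None or value not in rule[1]:
        raise ValueError(f"no equivalence rule for {system}.{attribute}={value!r}")
    canonical_attribute, value_map = rule
    return MemoryFact(canonical_attribute, value_map[value], system, attribute, value)


def translate_back(fact: MemoryFact, system: str) -> Tuple[str, str]:
    """Express a canonical fact in another system's own attribute name and code."""
    for (rule_system, local_attribute), (canonical, value_map) in EQUIVALENCE.items():
        if rule_system == system and canonical == fact.attribute:
            for local_value, canonical_value in value_map.items():
                if canonical_value == fact.value:
                    return local_attribute, local_value
    raise ValueError(f"{system} has no equivalent for {fact.attribute}={fact.value!r}")


fact = admit("PLM", "gender", "women's")
print(fact.value)                   # womens
print(translate_back(fact, "ERP"))  # ('sex', '01')
```

The fact keeps `source_system`, `source_attribute`, and `source_value` alongside the canonical form, which is one way to "remember where you came from" without storing the data twice.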

Michael Finocchiaro (22:09)

Rob, that makes me want to ask you, because we talked about this on the last call and you didn't join us: why is it so difficult, this translation between the engineering view and the manufacturing view? Because it's really the first breakdown that happens, that throwing-it-over-the-wall kind of thing. In your view, why is it so incredibly difficult?

Rob McAveney (22:26)

Yeah.

Because engineers and manufacturing folks think differently. It's as simple as that, right? The engineers think in terms of systems and subsystems, and manufacturing folks think in terms of which things do I need, in which order, in order to build a product. So yeah, the key is that the transformation happens in a way that is traceable.

Michael Finocchiaro (22:37)

You

Rob McAveney (22:58)

And is done in the context of something that can be added to product memory. And maybe to add a little bit to what Brion was saying, my position here is that the product memory should be all-inclusive, right? That every decision that's made should be added to the product memory. However, there are different levels of trust and different levels of confirmation that can happen to different records of a decision.

So if I have a chat with one of my colleagues and we make a decision that really doesn't have specific context, it should still be added to product memory. It should just have a lower ranking in terms of its relevance for future decision making. I also wanted to point out that the context for decisions is more than just the date and time and the people that were making a decision, right? It's the role of those people. It is the factory that they're talking about or the effectivity date that they're talking about, right? You can't just say, well, because a decision was made on May 1st at 10:27 a.m., that we know everything about the context of the decision.

Are they working on the work-in-progress design? Are they working on the released design? Or are they working on some future effectivity for a plant in Vietnam, for a product that's being built for the Asian market three months from now? All that information is critical to recording decisions and contextualizing them for AI to use downstream, contextualizing the decision process and really the impact of that decision.
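Editor's note: as a rough illustration of Rob's point that a decision record needs more than a timestamp, and that informal decisions should still be kept but ranked lower, here is a minimal Python sketch. The field names, the three trust levels, and the ranking rule are assumptions made for this example, not a description of any vendor's data model.

```python
from dataclasses import dataclass
from datetime import date, datetime
from enum import IntEnum
from typing import List


class Trust(IntEnum):
    """Hypothetical confirmation levels; higher means more confirmed."""
    INFORMAL_CHAT = 1
    REVIEWED = 2
    FORMALLY_APPROVED = 3


@dataclass(frozen=True)
class DecisionTrace:
    summary: str
    made_at: datetime    # when the decision was made
    made_by_role: str    # the role, not just the person
    design_state: str    # e.g. "work-in-progress" or "released"
    plant: str           # the factory the decision applies to
    effectivity: date    # when the decision takes effect
    trust: Trust


def relevant(traces: List[DecisionTrace], plant: str, on_date: date) -> List[DecisionTrace]:
    """Decisions in effect for a plant on a date, most trusted and most recent first."""
    hits = [t for t in traces if t.plant == plant and t.effectivity <= on_date]
    return sorted(hits, key=lambda t: (t.trust, t.made_at), reverse=True)


traces = [
    DecisionTrace("Try alternate fastener", datetime(2025, 5, 1, 10, 27),
                  "design engineer", "work-in-progress", "Vietnam",
                  date(2025, 5, 1), Trust.INFORMAL_CHAT),
    DecisionTrace("Switch to supplier B", datetime(2025, 4, 15, 9, 0),
                  "manufacturing engineer", "released", "Vietnam",
                  date(2025, 5, 1), Trust.FORMALLY_APPROVED),
]
for trace in relevant(traces, "Vietnam", date(2025, 6, 1)):
    print(trace.trust.name, "-", trace.summary)
```

Nothing is filtered out for being informal. The chat-level decision is still returned, just after the formally approved one, which matches the "all-inclusive but ranked" position described above.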

Michael Finocchiaro (25:00)

Thank you, Rob. I wanted to ask Jonathan. He had his hand up, but I had a question for him anyway. It's all working out perfectly so far. So Jonathan, in some of the writing I read just before organizing the call, you made the point that companies often treat engineering release as if it was the finish line, as if that's it, right? But from a manufacturing supply chain point of view,

Brion Carroll (25:06)

I like the way he's doing this. Oh, you got your hand up. I wanted to ask you a question anyway.

Jonathan Scott (25:08)

You

Michael Finocchiaro (25:29)

it's only the beginning. Why do we get stuck on this concept of engineering release all the time?

Jonathan Scott (25:35)

That's a great question. I think it has something to do with where configuration management came from, right? And I'm not the scholar on the history here, but I think about it in the broad terms that, you know, DoD and industries that really cared about configuration management brought that into the mainstream consciousness. And they were thinking about the product definition, right? They were thinking about the engineering specification of it. And so we end up with these processes that came from that.

Michael Finocchiaro (25:42)

Okay.

Jonathan Scott (26:03)

You know, now organizations have grown that concept, right? We've expanded CM to think about more than just the engineering definition, but a lot of organizations are still stuck in that mental model. So the finish line is: I've defined the product, and now, however we make it, we'll get that done. We'll put some gates on it, but we don't think about that as part of the full life cycle. It's ironic, right? Because we talk about the life cycle, but we often get stuck at engineering. I...

David Segal (26:15)

Thank you.

Yeah.

Jonathan Scott (26:32)

I think that's where that's coming from, but I don't know, that's just my perspective on it. The thing that I wanted to jump in on was exactly what Rob was talking about at the end. — And it ties back to the memory concept. I fully agree, Rob, with your point about you need more than the date and time where you decided this thing, right? I think you also need more than the simple facts, right? What the decision was. That's where I believe we have to be careful with product memory. We...

We need to start by capturing the facts, right? And that's, Brion, to your point, in a way that we all understand, using the same language, master data and ontology, that kind of thing. But we need to think about what else goes into making a real memory. It's not just the result. It was the reasoning that got us there. It was the reasoning that didn't get us there, right? Now, Brion, you made another great point too, which is to be careful how much we stick in product memory, because it starts to become the haystack where we can't find the needle.

David Segal (27:28)

Thank you.

Jonathan Scott (27:29)

And Rob's point about, you have to think about relevance

is good too. But what I'm getting at is, when an engineer, a purchasing person, or a manufacturing engineer makes a decision, we need to capture what they were thinking. When they said, "You know what, I looked at this, this, and this," those are the pieces of data. That's the context that Rob talked about, right? "These are the manufacturing part numbers I was considering, but I chose this one because..." It's the "because" that we need to figure out how to fit into product memory as well.

And the reverse, right? The negative of that. "And I didn't choose this one because, you know, that was halfway around the world and I was worried about supply chain issues, and so that's why I didn't pick it." Those pieces of information are what's going to be really valuable when we try to reason across the memory later, in addition to the facts, right? They connect up the real pieces of data.
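Editor's note: one way to picture what Jonathan is asking for, capturing the "because" for both the chosen option and the rejected ones, is the small Python sketch below. The structure, the part numbers, and the reasons are invented for illustration. The panel did not prescribe a schema.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Option:
    part_number: str
    chosen: bool
    reason: str  # the "because", recorded for picks and rejections alike


@dataclass
class DecisionRationale:
    question: str
    decided_by_role: str
    options: List[Option] = field(default_factory=list)


def why_not(memory: List[DecisionRationale], part_number: str) -> List[str]:
    """Collect every recorded reason a part was considered but not chosen."""
    return [
        option.reason
        for decision in memory
        for option in decision.options
        if option.part_number == part_number and not option.chosen
    ]


memory = [
    DecisionRationale(
        question="Which manufacturing part for the bracket?",
        decided_by_role="manufacturing engineer",
        options=[
            Option("MPN-100", chosen=True, reason="local supplier, shortest lead time"),
            Option("MPN-200", chosen=False, reason="halfway around the world, supply chain risk"),
        ],
    )
]
print(why_not(memory, "MPN-200"))
```

A later query such as `why_not(memory, "MPN-200")` is the kind of reasoning across the memory that the facts alone cannot support, since the final BOM would only ever show MPN-100.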

Brion Carroll (28:18)

This is a good time.

Michael Finocchiaro (28:20)

It makes me think sort of about GD&T and how all that design intent, if it's not pulled out of the drawings and put into the PLM, gets completely lost and we don't know why we're doing things. I'd like to steer that over to David and then to Oleg, because we haven't heard from them in a little while. David, do you think we should stop thinking in terms of engineering change and think more in terms of enterprise change to fix some of these problems?

Jonathan Scott (28:32)

Intent, that's a great word.

David Segal (28:33)

Thank you.

Enterprise change, obviously, is the dynamic that large organizations are going through. And in my view, where product memory will be effective is in supporting this enterprise change, for sure. And in fact, for engineering change, current systems are addressing it quite effectively.

And this is where those decisions, what Jonathan is talking about, could come together. The reasons for those decisions are coming from the different enterprise systems or applications or tools or whatever exists in the enterprise, not necessarily PLM systems. So that's the aggregation of information as the enterprise changes. This is what could become this kind of platform for product memory, in my view.

Michael Finocchiaro (29:55)

Thank you, David. We have another two, three minutes in this section. Oleg, how would you...

David Segal (29:57)

Ahem.

Michael Finocchiaro (30:05)

I'm trying to pick one of these in here for you. What kind of product memory is missing in this handoff between the different departments? What are the data points that we need to... Because we were saying we don't want to put everything up. But how are we going to decide what data goes up in product memory and what stuff stays down in the in-work world, right?

Oleg Shilovitsky (30:31)

You're kind of reading my mind. I don't know how you do it, by the way. So you probably have something about memory, right? Yes. Yeah. I was kind of thinking about this from a perspective of also understanding what organizations do.

Michael Finocchiaro (30:31)

There you go.

Brion Carroll (30:36)

Hahaha.

Michael Finocchiaro (30:37)

I have superpowers. I just have superpowers.

Oleg Shilovitsky (30:48)

But also from the perspective of, like, we don't know what we don't know. I mean, just as a reminder, the Google moment was very strong in my past. So when someone asked Sergey Brin, "Why are you scanning all these websites?", he said, "I don't know, but we will figure it out." Like, why are you indexing all these websites? I think that was the interesting observation. So I was looking at the company and what is happening. I put down four pieces that are

Oleg Shilovitsky (31:16)

kind of unclear and lost. I wrote them down. If you think about this, first of all, there are decisions that live in people's heads today. So we make these decisions, and they are not recorded anywhere. Like, you can decide to switch from supplier A to supplier B. We made a change, we've discussed it, it's over. Okay, so it's not captured. So no one remembers it, the people left, nothing. So that's the first thing.

Then the history of our decisions. For example, we made a replacement. We made a change. The enterprise change management that David is talking about applies to this. So we made a change, but we don't remember why we did it. So those are the previous decisions that are not captured. And then there is a synthesis of information that comes from multiple systems. And at that moment in time, based on this, we're making decisions. So imagine the sandbox of materials, the information pulled from multiple systems that we use in making decisions.

We apply changes in multiple systems and the entire decision point disappears, vaporized. We don't have it. It lived in that Excel spreadsheet that I sent between people, you remember, and that was the synthesis between different systems. We made a decision. Like, where is the spreadsheet? I don't remember. It's gone, okay? And...

Michael Finocchiaro (32:37)

You're getting a lot of thumbs up and little things flying in the window just to tell you that you're hitting good points.

Brion Carroll (32:43)

It's uplifting.

Oleg Shilovitsky (32:43)

I mean, I cannot... And the last one is the different actions and activities that are taken outside of the systems. For example, someone approved something outside of the system, and it disappeared. So I'm thinking about all these things that are happening in different places in organizations. They're happening in different systems, different organizations, and different media.

And we currently have such a strong technological capability to capture this in different forms and build what many people today call a context graph. This context graph is accumulating all this information, accumulating it without immediately saying whether it's right or wrong. We just captured this. And once we are accumulating this information, again going back to this Google moment, we can come to something very interesting that we never thought about regarding organizations: their decisions, how they manage products, and what's happening with the product.

Because those things today are, first, not captured, and second, disconnected. Once we capture them and connect them, then the magic will happen.
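
As a rough illustration of the "capture first, judge later" idea described above, here is a minimal sketch of an append-only context graph. It is only a sketch under stated assumptions: the `ContextGraph` class, the node identifiers, and the relation names are all hypothetical and invented for this example, and nothing here reflects OpenBOM's data model or any vendor's API.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ContextGraph:
    """Hypothetical append-only store of captured facts and their links."""
    nodes: dict = field(default_factory=dict)  # node id -> attributes
    edges: list = field(default_factory=list)  # (source, relation, target, note)

    def capture(self, node_id: str, kind: str, source: str) -> None:
        # Record what was observed and where it came from.
        # No right/wrong verdict is attached at capture time.
        self.nodes.setdefault(node_id, {"kind": kind, "source": source})

    def link(self, src: str, relation: str, dst: str,
             note: Optional[str] = None) -> None:
        self.edges.append((src, relation, dst, note))

    def why(self, node_id: str) -> list:
        """Return the notes on every edge that touches a node."""
        return [note for s, _, d, note in self.edges
                if node_id in (s, d) and note]


# Illustrative data only: the supplier switch mentioned in the discussion.
graph = ContextGraph()
graph.capture("supplier:A", kind="supplier", source="ERP")
graph.capture("supplier:B", kind="supplier", source="ERP")
graph.capture("decision:1", kind="decision", source="email thread")
graph.link("decision:1", "switched_from", "supplier:A")
graph.link("decision:1", "switched_to", "supplier:B",
           note="reason recorded at decision time")
print(graph.why("supplier:B"))
```

The design choice worth noting is that `capture` never validates or scores the fact; interpretation is deferred to whoever, or whatever agent, later queries the graph.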

Michael Finocchiaro (33:57)

Jonathan, I wanted to come to you on change management. A lot of companies want to go faster. And so they try to remove steps in the process. And I believe you've said in the past that it could be very dangerous to remove the steps. So why is skipping an ECR or some other steps going to actually slow down the enterprise as you're trying to build this product memory and accelerate things as fast as possible? Why is that?

Jonathan Scott (34:22)

Yeah, it's counterintuitive, but I think it's because people don't actually skip the steps. They skip the steps in the system, right? They say, you know, "I don't need to evaluate this. I don't need to check impact analysis. This is a simple one. This isn't going to matter. So don't worry about that step." The reality is they did reason through it. They did the step in their mind, but they never captured it. And so we miss it. And it's what Oleg was just talking about.

All the actions and activities that happen that we say are insignificant are maybe insignificant to the expert, but they're very significant to the person who's trying to learn the process, understand the process, or repeat the process. And I think that's what a lot of companies miss. I mean, I see it all the time in implementations. You go to talk to a customer about how they want to implement engineering change, and the senior people who've been there a long time, who have product memory, they're saying, "I don't need all these steps. I don't need to do all this formality." Because for them, it's sort of embedded in how they operate.

But if you ask the people who've been there two or three years, they would say, "Yeah, I need the steps. I need the guidance. I need to remember how it goes, what we check, what our checklists are." And it's an interesting observation to me that as I see different companies with different characteristics, right, where they've got workforces that are aging out versus new and dynamic, they have very different patterns in terms of what they think is important. And it relates exactly to what you said. They see the value of going slow to go fast by saying we take all the steps. We try to make them quick, but we do take all the steps.

Michael Finocchiaro (35:57)

Jonathan, Rob, I wanted to switch to you because one of the examples you've shown is how AI helps translate requirements, regulations, and business needs into PLM models and workflows, which means it's a sort of different role than we've traditionally been thinking about for AI, right? Instead of answering questions, it's actually evolving the PLM system as a sort of living entity, right? So where can agents reduce the implementation burden without weakening the whole governance and the trust that we need to build inside the system? Sorry, big question, but I think you can handle it.

Rob McAveney (36:31)

Yeah.

I'll give it a shot. So I think part of the promise of AI is not just about being able to navigate large data sets and answer questions, but being able to turn data into proper data sets, to turn unstructured data into a proper digital thread.

And I think the value of that is really underestimated. Yeah, the requirements ingestion is just one use case, but the goal there is not only to make it more convenient to turn a regulatory requirement or a customer-documented requirement set into data, but along the way to refine that data, to ask good questions.

And I think part of a well-functioning product memory system, so to speak, would be, first of all, coming to the user: not making the user go to a system, but having the system come to the user where they are, be it in Teams or Slack or Excel or Word or CAD. Whatever it is, the system should come to the user.

Then there's AI behaving like a three-year-old when importing requirements, asking why. Why did you import that requirement at this time? Why did you decompose that requirement this way? Why did you connect this requirement to this product line? That way we can be active in terms of capturing the product memory instead of simply relying on contextual clues and capturing a chat log. That's of course one way to do it, and we should be doing that. But if an individual is making decisions in their brain and isn't prompted to write down that decision and the context or reasoning behind it, we'll never capture it. And so I think it's really: bring the AI to the user.

Ask good questions and use the AI not just to build a better digital thread, but also to capture the reasoning behind decisions being made.
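
To make the "ask why at import time" idea a little more concrete, here is a minimal sketch of storing the answers next to the imported requirement as a decision trace. All names (`DecisionTrace`, `import_requirement`, the `ask` callback, the `REQ-001` identifier) are hypothetical, and the canned answers are made up purely for illustration; a real assistant would put the questions to the user inside Teams, Slack, or CAD.

```python
from dataclasses import dataclass
from typing import Callable

# The three "why" questions quoted in the discussion above.
WHY_QUESTIONS = (
    "Why did you import this requirement at this time?",
    "Why did you decompose this requirement this way?",
    "Why did you connect this requirement to this product line?",
)


@dataclass
class DecisionTrace:
    requirement_id: str
    answers: dict  # question -> the user's stated reasoning


def import_requirement(requirement_id: str,
                       ask: Callable[[str], str]) -> DecisionTrace:
    """Ask each 'why' question and keep the answers with the imported item."""
    answers = {}
    for question in WHY_QUESTIONS:
        reply = ask(question).strip()
        # An empty reply is stored as unanswered rather than silently dropped.
        answers[question] = reply or "(unanswered)"
    return DecisionTrace(requirement_id, answers)


# Stand-in for an interactive prompt; these replies are invented examples.
canned = iter(["New regulation takes effect", "",
               "Only this line ships to that market"])
trace = import_requirement("REQ-001", lambda question: next(canned))
for question, answer in trace.answers.items():
    print(question, "->", answer)
```

Recording "(unanswered)" explicitly is a deliberate choice in this sketch: a missing rationale is itself a piece of product memory that someone can follow up on later.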

Michael Finocchiaro (39:08)

Thanks, Rob. And I wanted to also thank the 15 to 18 people I see live. I mean, it's going up and down. It'd be a lot more than that, but thank you everybody for listening. There are quite a lot of comments and I think my panelists will be very glad to jump on those after this call. I also want to point out that AWS is sponsoring this. So if you want to click on the link down in the comments and download their white paper, that'd be really cool.

Obviously we're going to need to put guardrails in place before AI is allowed anywhere near the actual execution. But how can we make those agents more reliable, and how can product memory actually be used to make those agents more reliable?

Brion Carroll (39:48)

So, we've gone through two or three speakers since my original hand was up, so it's kind of morphed into a different answer or question. I like where you are because agenting, let's say what Rob talks about, going from requirements with some semantic understanding into 3D design, right?

Michael Finocchiaro (40:02)

Which is the point of the discussion, right?

Brion Carroll (40:17)

That now becomes a resource as to why. You took these requirements, you created this design starting point, and the key question is why, right? So AI agents should not be off the hook on putting their decisioning, a "this is what I did and why," into product memory, right? So we can't forget.

Actually, by the way, I'd like to state one thing, which was the way-back hand up: equivalence of organizations is going to be the result when product memory becomes the harmonized content from all the different systems. You know, if you look at investment, ERP is like 46 to one on PLM, right? Nobody knows why, except ERP makes money because it makes products which generate revenue.

But people forget that no new products will exist if they choke off the engineering people or the creative group. So all organizations should be looking at how to make an equivalence in funding. But that aside, take product memory and have it be the correlation and the harmonization of all these other systems and agents and all the things going on, so that you could do machine learning, as David said, against that to create AI insights.

That is going to be the empowerment of the business where all people... it's almost like a chorus. You know, when I was a kid, I was in chorus, and who the hell knows why, but I was in chorus. And it was all these people that really had no recognition as being humans because they were in school. And, you know, the little people didn't have friends, the overweight people didn't have friends, but when they were in chorus, they all became one, right? And so it's kind of like product memory is going to become the chorus of the organization. And I think that's really going to empower a different view of why people will begin to contribute, because they know it's going to go into product memory. It may be forgotten over here, but it's going to go into product memory and that will drive other things. So I think people should look forward to product memory and its ability to take all that content and harmonize it so that new decisions, new insights, new opportunities can arise from the smallest pieces of data that correlate to other data.

Michael Finocchiaro (42:49)

Thanks, Brion. In one minute before we switch chapters, I wanted to ask you whether AI agents are summarizers or are they content curators in this case?

Oleg Shilovitsky (43:01)

Well, that's a great question. What I've seen, and this is in one of the articles that I wrote, is a sort of product memory flywheel that moves between three steps, and they repeat. The first of them is capture. This is what many of you talked about: how we can capture all these different pieces of information together.

And then we go into the different review stages. This is where people can observe, where people can make decisions, where people can reason on top of this memory.

And then we go to the flow and distribution, because some outcome of this reasoning and of these actions can go to different systems as a result. And what I see is that this flywheel is spinning, and the faster it's spinning, the more memory is built and the more efficient it becomes. So over time, more and more quality comes out of these activities, and agents are acting against this context graph or against this product memory. That gives them a space to be efficient for different reasons. You can take a look and think about how you distribute data, the different elements of the digital thread. You can think about making decisions in the context of the enterprise change management that David presented. It can be capturing conditional pieces of the dependency graph that would say...

So I can bring all these examples together. What I found very interesting is that the spinning of this flywheel brings more memory and makes more connections.

Michael Finocchiaro (44:58)

Thanks, Oleg. And I like the visual flywheel thing. That's really helpful. Thank you. David, I'm coming back to you. I wanted now to switch to the next chapter, the chapter before last, and I'm going to call that architecture. What does product memory require? And I'd like to ask you, when you're working with large manufacturers that obviously cannot rip and replace their PLM, ERP, PQMS, and all these systems, they have to work with the existing landscape, right?

So what has to be architecturally true before they can actually start realizing something like a product memory?

David Segal (45:35)

And before I answer this question, I would like to complicate the situation a little bit more. So, you know, three minutes, no long speech. Look, I was last week at Hannover Messe, and Hannover Messe was all about AI, but three different types of AI: industrial AI, physical AI, and agentic AI.

Brion Carroll (45:43)

Good, good, we like that.

Oleg Shilovitsky (45:43)

It was already complex.

Michael Finocchiaro (45:46)

You only get three minutes, so it better be quick.

David Segal (46:04)

Industrial AI is more all-encompassing. What we talk about could be product memory. Physical AI is all in robotics, right? So we talk about product memory. Now we are not speaking about the actual product. It's an actually moving and performing product, right? It's a product that has a memory on the edge. It has an AI on the edge. So all this data gets accumulated.

Then there is agentic AI: multiple agents that actually collaborate. And there was the subject of agency, right? When you have to manage multiple agents coming from multiple vendors that would perform one task. So speaking of architecture, it's not about systems-of-record architecture anymore. It's not about an architecture that would hold and orchestrate workflows or data flows. It's an architecture that could handle all the different aspects: not only knowledge graphs, not only semantic definitions for systems of record, but also the data coming from real-time systems like IoT systems, the systems that are actually running the physical AI. So it actually broadens the subject of product memory.

And I'm not even touching the subject of IP rights here, which is maybe a subject for an entire discussion. But if we speak of the real deployment of product memory, its definition of architecture should take into consideration these different aspects, obviously with a multi-layer approach. And it should be one thing. It should be a dynamic, purpose-built, real-time capability that addresses the immediate, momentary need of an engineer or user who accesses this part of memory for a purpose.

Michael Finocchiaro (48:21)

From an implementation standpoint, product memory depends on shared meaning, right? And you've mentioned this before on our calls. If one system says item and another says part, and you were using an example earlier, how are we going to establish the semantic consistency where it's enough for product memory to actually be trusted? That it knows that an item is a part.

Brion Carroll (48:22)

what's that?

See, that's the...

Yeah, I know.

Michael Finocchiaro (48:46)

or SKU number or whatever, right? Depending on this, how do we...

Brion Carroll (48:48)

Well, that gets into the meta layer, what I'll call the meta layer, right? Which is: as data is coming in and being ingested into product memory, let's call that an ambiguous system. It could be a graph database structure. It could be any number of things. And David was kind of pointing to the fact that it could be, you know, a harmonization of many different things all encompassed in an umbrella. We've used that term before. But the fact is no data should ever go into product memory unless it's been vetted, period.

And the point is you vet content at the doorway on the way in; you don't try to fix it once it's in. So let's go back to that gender example, where gender in PLM becomes sex in the ERP, and male becomes zero zero, female becomes zero one.

If ERP sent up a 07, it'd go, "Whoa, whoa, whoa, for sex there is no 07. I don't know where you're coming from." And it would reject that to be repaired, resolved, and fixed by whoever is manning the ERP, right? Or if it's an agentic AI thing going, "Oh, trust me, we do," then that would cause someone to say, "We've got to fix that AI utility."

But it has to happen before data goes in. It can't happen after data crosses the threshold. And that's where product memory should always, always be a proven set of content that can be trusted. Now, if AI is running on top of it and generating insights, you'd want an explanatory AI that says, "Hey, by the way, Bob, I got this insight that you should never sell these products in this region because the pricing you have is, you know, counter to what they expect. And I know this because bing, bing, bing, bing, bing," right? And the bing, bing, bing, bing, bing should be explanatory content derived from product memory, harmonized to a standard, and delivered in the vernacular of the human that it's talking to. It shouldn't say sex if the human is expecting to see the word gender.

Well, how do I know that? Well, it's the profile of the user. So there are a lot of things that happen here, but what I love about product memory is that it can grow as system intimation grows. So if PLM and ERP are now intimated, that means intelligently integrated, and no data, based on data governance, is expected to change in either system without the other knowing. If you started there, that becomes your product memory. Now, if you had SCM,

Michael Finocchiaro (51:09)

Thanks, Brion.

Brion Carroll (51:39)

and intimated it to ERP and PLM, that now becomes part of product memory. So you can literally increment your organization to contain product memory as a growing set of content. Again, going back to it, these all become equivalent influence factors to insights. So everyone represented becomes part of the overall equation on the top. And that creates the equivalence of: I don't care if you come in from SCM, ERP, PLM, assortment planning, e-commerce, you're all equal when you get in here. And that's the key.
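
The "vet it at the doorway" rule from this answer can be sketched as a small gate in front of product memory. This is a minimal sketch under assumptions: the `META_LAYER` table, the `vet` function, and the `RejectedRecord` exception are hypothetical names, and the only mappings used are the ones from the gender/sex example in the discussion (00 for male, 01 for female, with 07 rejected).

```python
# Hypothetical meta layer: which field each source system uses,
# and which codes are allowed, mapped to one canonical vocabulary.
META_LAYER = {
    "ERP": {"field": "sex", "codes": {"00": "male", "01": "female"}},
    "PLM": {"field": "gender", "codes": {"male": "male", "female": "female"}},
}
CANONICAL_FIELD = "gender"


class RejectedRecord(ValueError):
    """Raised at the doorway so the sending system's owner can repair the data."""


def vet(system: str, record: dict) -> dict:
    """Translate a record to the canonical form, or reject it before it gets in."""
    rule = META_LAYER.get(system)
    if rule is None:
        raise RejectedRecord(f"unknown source system: {system}")
    raw = record.get(rule["field"])
    if raw not in rule["codes"]:
        raise RejectedRecord(f"{system}.{rule['field']} has no code {raw!r}")
    return {CANONICAL_FIELD: rule["codes"][raw], "source": system}


product_memory = []
product_memory.append(vet("ERP", {"sex": "01"}))  # accepted and harmonized

try:
    product_memory.append(vet("ERP", {"sex": "07"}))  # never reaches memory
except RejectedRecord as err:
    rejection = str(err)
    print("rejected:", rejection)
```

Because `vet` raises before the append happens, the bad record never crosses the threshold; the rejection message is what would be routed back to whoever owns the sending system.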

Michael Finocchiaro (52:15)

Thanks, Brion.

Rob, just to close out this chapter before we move into the final chapter, I wanted to ask you, because we were talking about the agentic framework earlier: a copilot can answer questions from documents, but an agent that recommends or executes changes needs something much stronger. It needs relationships, permissions, constraints, impact logic. So what does a dependency graph need to know before AI can safely act inside a PLM with agency?

Rob McAveney (52:44)

It needs to know everything, of course. I think we should recognize that data is rarely perfect. And even our understanding of the meta layer, as Brion calls it, or the ontology, is also imperfect. So my position would be that our goal should not be to have a very strict

Michael Finocchiaro (52:45)

Yeah

Oleg Shilovitsky (52:46)

Good.

Rob McAveney (53:14)

gateway to store perfect data. Our goal should be to take imperfect data and try to make it more perfect over time. And I would say that the same thing is true of the ontology. If in fact there is an equivalence between gender and sex, and we don't have that already stored in our ontology, then we should be able to ask the user: are these things equivalent? And not just ask one user, but ask a dozen users their interpretation of this question, such that we can improve on the ontology. And then as we have that virtuous cycle, or the flywheel in Oleg's terminology, the flywheel will create better and better data over time.

Now, as it relates to the dependency graph, and I'll keep it short here because I know we're running long: the dependency graph is then both a source of context for the decision traces and a place to store some of the resulting decisions that are made. So by making a decision that a part or a document is affected by a change, we are creating a relationship and a dependency between that change and the data that it's impacting.

And so, again, it just becomes this virtuous cycle: the more decisions we collect, the better data we get; the more questions we ask, the better understanding of the ontology we get. Over time, the product memory becomes much more perfect than human memory.
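
The dual role described here, a dependency graph that supplies context for a decision and also stores the decision that results, can be sketched as follows. This is an illustrative sketch only: the `DependencyGraph` class, its method names, and identifiers such as `part-1` and `ECO-42` are hypothetical and do not correspond to any particular PLM system.

```python
from collections import defaultdict, deque


class DependencyGraph:
    """Hypothetical graph: items, what depends on them, and recorded decisions."""

    def __init__(self):
        self.dependents = defaultdict(set)    # item -> items that depend on it
        self.affected_by = defaultdict(dict)  # change -> {item: reasoning}

    def add_dependency(self, item: str, depends_on: str) -> None:
        self.dependents[depends_on].add(item)

    def impact(self, item: str) -> set:
        """Context: everything reachable downstream of `item` (breadth-first)."""
        seen, queue = set(), deque([item])
        while queue:
            for nxt in self.dependents[queue.popleft()]:
                if nxt not in seen:
                    seen.add(nxt)
                    queue.append(nxt)
        return seen

    def record_decision(self, change: str, item: str, reasoning: str) -> None:
        # The decision itself becomes a stored relationship, not just a log line.
        self.affected_by[change][item] = reasoning


g = DependencyGraph()
g.add_dependency("assembly-10", depends_on="part-1")
g.add_dependency("drawing-10", depends_on="assembly-10")

candidates = g.impact("part-1")  # what a change to part-1 might touch
for item in sorted(candidates):
    g.record_decision("ECO-42", item, "confirmed affected in review")
print(sorted(candidates))
```

In this sketch the traversal only proposes candidates; the human or agent confirming each one is what writes the new change-to-item relationship back into the graph, which is the cycle the answer describes.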

Oleg Shilovitsky (55:11)

Yeah.

Michael Finocchiaro (55:12)

Nice. So Jonathan, we've got the last chapter, about ROI, maturity, and first steps. Although we don't have a lot of time, I was going to go to Jonathan and Oleg and then let David, Brion, and Rob comment before we end. So in terms of people management, because all of this also has humans in it, a company could build a sophisticated product memory architecture based on some principles that David outlined and some of the ideas that Rob also talked about.

Michael Finocchiaro (55:42)

But if the organization can't absorb the change, then of course, like so many other systems, it's just going to sit on the shelf and no one's going to use it. So what is the human maturity metric? How do we know whether an organization is ready for product memory?

Jonathan Scott (55:56)

That's a great question. And I think it does speak to the human piece of this. My experience with AI today, right? And I know we're not talking about just AI, but I think it's a good proxy for this, is different people are operating at different speeds, right? Some people are ready, some people are eager, some people are waiting, some people are scared. And we have to think about that when we think about this product memory idea and how to introduce it to an organization. Because I think it was David who mentioned IP earlier, right?

Jonathan Scott (56:24)

That's one of the scary things. A lot of people, knowledge workers in jobs today, are thinking, "Wait, am I going to share all this knowledge and reasoning and logic that I have with systems that will capture it and then make me obsolete?" So, you know, it's not just the pace of change. That's the easy answer to your question, Michael, right? When we keep changing things constantly, organizations get fatigued and it's hard for people to adopt the new thing. But there's also the fear. So I think it's both. I'll be quiet because we don't have much time, but those are slowing us down.

Michael Finocchiaro (56:56)

Awesome, thank you. So Oleg, let's make this practical. If a company wants to understand where product memory is missing today, and they don't want to do it over an entire year, what's the first exercise they could run in order to assess where product memory is missing? Other than installing OpenBOM, obviously, because... Just keep going out on the side.

Oleg Shilovitsky (57:15)

Well, that's...

Oleg Shilovitsky (57:20)

I don't know how you come to this idea.

But I divide it into two different pieces, and this is important. One comes from what Rob mentioned before, and the second one comes from Jonathan. The first is how do we do it without people? And the second is how do we integrate people in? I will explain what I mean by this.

So, you know, one of the most fascinating things at Google was how to exclude people from the decisions and how to capture everything without people. So, how to capture data from different sites that you do not control. You remember this famous PLM standards discussion: "Let's have standards." "Yes, and I have one." So that was the standard conversation. That will not work. And the first is how to bring data

Michael Finocchiaro (57:49)

In two minutes or less.

Oleg Shilovitsky (58:17)

into the spinning wheel, so that it will bring data and the memory will become better over time. So I will stop here, but this is the one important thing: how to make it without people, because people will not agree. If there's anything I've learned in the last 25 years, it's that people will not agree. So let's figure out how to do it without them. In other words, how can I index your website when I don't know how it's built, what data structure it uses, and what technology it's based on? So that's different. Without this, it will not work. So it's how to...

Michael Finocchiaro (58:34)

Hahaha

Oleg Shilovitsky (58:47)

take people out. Now the second is more tricky and more interesting: it is about how to include people and not scare them, because systems are learning from you. And there is a very interesting thing that I observe, which is that AI is learning from you. Organizations can come and say, "Now get out. We don't need you. We already know you. We already know how you make decisions." It's a very interesting lock-in that might happen. It's more than the lock-in in CAD formats that we all know.

It will be locking in your decision mindset. So if the system can learn how you make decisions, great, the system already captured you. Thank you very much. You can go. So that's a scary moment. But those are the two things that we need to balance. First, how to exclude people, so we will get data, we will get the context, we will collect all this information. This is where people should not be stopping it. And the second is how to give protection to people and IP and what they do. I think it will be a tricky balance to manage, but this is where I believe it's supposed to go. And I think this is only two minutes, so I can stop here.

Michael Finocchiaro (59:51)

Okay, David, you got 30 seconds. Do you want to talk about the first, where people should start?

David Segal (59:57)

First of all, I do think that product memory is a practical subject, as much as we're trying to rationalize it and see where we will start.

But we started already. All those semantic definitions that we do, all this elevation of the data from those systems of record to the agnostic kind of umbrellas, right, call it whatever you want: that's the first step. And certainly I envision that there will be product memory for engineering, product memory for manufacturing, product memory for other things, right? I just mentioned all these different robotics and physical AI, which will require a different type of product memory. But I think, speaking practically, this product memory could be a good approach that different vendors and companies who have roadmaps to grow their AI capability can target, with the practical sense of making it actionable for different roles in the enterprise.

And as Oleg said, it may not be for humans, but adoption will happen with the human first and then agents. So it's always agent plus human, but we need to make sure that this product memory subject will be appealing to engineers, that they will see it's productive for them, which I think it is.

Michael Finocchiaro (1:01:29)

Thank you, David. Brion, you want to add to that? And then Rob, and then we'll probably need to say goodbye because we're a little over.

Brion Carroll (1:01:35)

Yeah, yeah. I'll be very quick. I know that's weird, but I will. There you are. The solid limb, bro. It's a solid limb. So I already talked about the fact that product memory should be an incremental solution. As systems become intimated and data becomes trusted, and this goes to Rob's point, you know, it'd be great if the ontology or meta layer filtered out

Michael Finocchiaro (1:01:39)

I'm taking a big risk. I'm going out on a limb, right? Just don't saw the limb off while I'm standing on it.

Brion Carroll (1:02:04)

things before they went into product memory, so that it would not be confusing, would not have to be reworked, right? But, you know, hey, it all depends on where you're expecting that product memory to have use, but it can be incremented. So where, Michael asked, do you start? Start where they're intimated. If you've got ERP and PLM connected, use that. Begin to, you know, poke around with how to do product memory, and from product memory how to develop insights, and from insights how to, you know, correct the ship.

And Oleg, you brought up, and I think Jonathan also brought up, people becoming afraid that they may become outdated or no longer useful. That's not going to happen. The reason is because new products are going to be formed and new insights are going to be developed by the gray hairs, right? And from that you have a better roadmap. And when people leave, which should be for retirement or "I want to go to a new job," others that have learned from them will replace them, right? So the mentorship model is something that will keep people engaged. The new products will keep people in business. So let product memory be what it is supposed to be, which is a memory of how products evolved over time, and let that be. Because you've got libraries, but we still speak, we still tell stories. Donald said something where there's a ton of stuff.

Michael Finocchiaro (1:03:33)

Ha

Brion Carroll (1:03:33)

There's a ton of stories out there and they just keep coming, right? Nobody stops writing fiction or nonfiction. So don't worry about it. Just work, work, work, and let your memory be something that adds value to the business. Thank you and good night.

Michael Finocchiaro (1:03:47)

Thanks, Brion.

Thank you, Mr. Cronkite. Rob, do you want to jump in for last word?

Brion Carroll (1:03:53)

No

Rob McAveney (1:03:58)

Sure, I'll be brief. So from my perspective, the pragmatic approach to beginning to capture product memory is to go where the decisions are being made. Go to where the design review meeting is happening, go to where the Slack chat or Teams chat is happening, and meet the users where they are. Begin to capture the decision traces in whatever format is most convenient.

Don't wait for a system to be in place. Don't wait for a plugin for Teams that's going to automate a whole bunch of data capture. Don't wait for all that stuff. Start capturing it. Start cataloging it. And over time, you'll find that the data that you capture will find a home in the systems that are being developed right now. It'll find a home.

It'll be connected to your digital thread. It will be the product memory that you're looking for. Just don't wait. Start doing.

Michael Finocchiaro (1:05:03)

That's great. I love the call to action there, which is what you want to do at the end of a call. Speaking of calls to action, our sponsor AWS would like you to click on the link and download a white paper. Thank you once again so much to my panelists, Brion, Oleg, David, Jonathan, and Rob, if I go counterclockwise around from what I see. I hope that you guys had a good time. I thought that was really cool. I thought it was a good discussion without too many interruptions. Thank you very much also.

Brion Carroll (1:05:26)

Yeah, yeah, that was good.

Michael Finocchiaro (1:05:32)

We haven't really decided on the next subject. Oh, by the way, and I think I said this earlier, but panelists and listeners, please interact on the chat, because there are actually about 20 or 30 questions already, which is really cool. There was a lot of interaction. One person was listening to us on YouTube. My apologies to that one person. Actually, there are two people I see on there. A lot of the discussion is actually on LinkedIn. So, you know, I just tried to put it on as many platforms as possible, but the discussions are more on LinkedIn.

Jonathan Scott (1:05:46)

Awesome.

Michael Finocchiaro (1:06:02)

Once again, I'm not sure what we're going to talk about next time. Maybe we'll continue this, maybe this murky world between engineering and manufacturing. Do you guys remember last week when we were talking to David Schultz, who comes from that MES world? He was talking about defining the eBOM in terms of ISA-95 so that it becomes natively translatable to the manufacturing side, which is all ISA-95 level three. So that might be an interesting topic to explore a little bit because semantically

Brion Carroll (1:06:14)

Yeah.

Michael Finocchiaro (1:06:29)

That could be part of that product memory. That way we're capturing it in the language that the next system is going to understand, for example. Exactly. That's part of it, but not all of it. Thanks everybody. This is a great episode. I hope that you all enjoy it. I hope you click on the link down in the comments, and we'll see you next time. Probably what, three, four weeks, maybe end of May. And once again, many thanks to Rob for taking time to join us. David as well, our special guest.

David Segal (1:06:35)

UNS, Unified Namespace.

Brion Carroll (1:06:36)

Right.

Michael Finocchiaro (1:06:59)

And Oleg and Brion and Jonathan, as always, thank you so much. It was awesome. Cheers to everybody.

Jonathan Scott (1:07:03)

Thanks everybody.

Oleg Shilovitsky (1:07:04)

Thank you everyone.
