Pillar Topic · PLM

The canonical answer to 'what is PLM' — definition, what it is not, the big three vendors, the disruption wave, and where the architecture is heading.

Key Takeaways

- PLM is the system of record for product data — BOM, change, configuration, lifecycle state.

- PLM ≠ PDM (which manages CAD files) and ≠ ERP (which manages transactions and inventory).

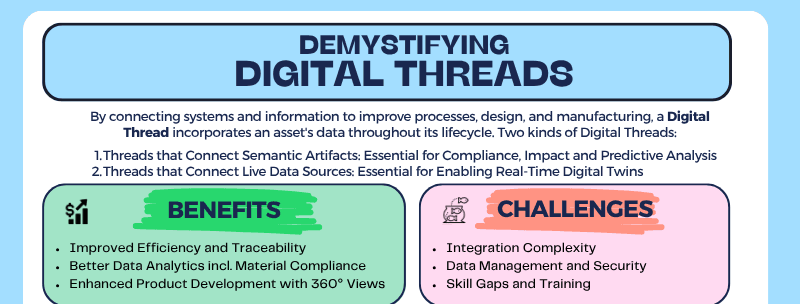

- Modern PLM is moving from suite-centric monoliths to thread-centric architectures.

- The digital thread connects PLM to manufacturing, service, and customer data.

- Agentic AI changes the PLM operating model — agents read the BOM, route changes, and update the system of record.

Short Answer

Product Lifecycle Management (PLM) is the system of record for everything that defines a product: its bill of materials, its configurations, the changes it has gone through, and its state at every phase from concept to retirement. It is distinct from PDM (which only manages CAD files) and ERP (which manages financial transactions). Modern PLM connects engineering, manufacturing, and service through a governed data thread.

- PLM governs the BOM, change history, configurations, and lifecycle state of a product.

- PLM is engineering-led; ERP is finance-led; both must coexist.

- The big three (Dassault 3DEXPERIENCE, Siemens Teamcenter, PTC Windchill) dominate enterprise PLM.

- Cloud-native and AI-native challengers are attacking specific PLM gaps.

- Thread-centric architectures keep PLM Core narrow and let best-of-breed tools attach via MCP.

Why it matters: Without PLM, the answer to 'which version of which part went into which product on which date' is a forensic exercise across spreadsheets and emails. With PLM, it's a query. Every cross-functional question — change impact, recall scope, regulatory traceability, AI training data quality — depends on a system that knows the truth about the product.

What PLM Is

Picture a centrifugal pump. Not a glamorous product, but a mechanical-engineering classic. To design it, manufacture it, certify it, ship it, and service it for the next twenty years, an engineering organization has to wrangle a startling variety of data: CAD geometry from at least two vendors (a SolidWorks impeller, a Creo casing, maybe an NX shaft assembly), a requirements document the customer wrote in Word, simulation results from a CFD tool, a bill of materials with three hundred line items, a stack of supplier datasheets, regulatory test reports, an installation manual, a service-bulletin history. None of that data lives in one format. None of it lives in one tool. And all of it has to stay consistent with itself as the design changes, across two engineering sites, four suppliers, and the inevitable mid-program scope shift.

Product Lifecycle Management is the system of record for the product itself — the place where the bill of materials, the change history, the configurations, and the lifecycle state of every part live under governed control. Without it, the answer to "which version of the impeller went into the pumps we shipped to the customer in Q3?" is a forensic exercise across spreadsheets, emails, and someone's desktop folder. With it, that answer is a query. PLM is engineering-led — it owns the design intent — and it sits next to ERP (which owns the financial transactions) and MES (which owns the shop floor), not in place of them. The three coexist; they don't substitute.

Two readers tend to find their way to this page. The first works at a brownfield manufacturer — the company has CAD seats, an ERP, a fileserver full of drawings, and no PLM, and somebody has finally said the quiet part out loud: this doesn't scale. The second is planning a greenfield IT stack and trying to avoid the mistakes the first reader inherited. Both should know up front that "PLM" looks very different depending on what you make. A discrete-manufacturing PLM (the pump above, a tractor, an electric vehicle) is dominated by BOM structure, change orders, and CAD interop. A process-industry PLM (food, pharma, specialty chemicals) is dominated by formulations and recipes. A construction or BIM stack solves a similar problem with different primitives. Natural-resources operations — AgTech, mining — bring asset management and field telemetry into scope. And inside discrete manufacturing alone, the PLM you need depends on whether you are highly regulated (aerospace, medical devices), highly variable (fashion, consumer goods with seasonal SKUs), configure-to-order, engineer-to-order, or build-to-stock. Same category name. Very different software, very different processes, very different vendor shortlists.

Three facts about PLM that everything else depends on:

- PLM is the system of record for the product itself — what it is made of (BOM), how it has evolved (change history), what configuration is valid for which customer or market.

- PLM is engineering-led. ERP is finance-led; MES is shop-floor-led; PLM owns the design intent.

- PLM governs change. Without governed change, every other system downstream — manufacturing, service, regulatory — is operating on assumptions.

What PLM Is Not

PLM is often conflated with adjacent systems. The differences matter.

PLM ≠ PDM

PDM (Product Data Management) manages the CAD data — and only the CAD data. It versions files, controls check-in and check-out, holds the assembly structure that the CAD system understands, and stops there. PLM is the broader category: at a minimum it manages product data, items and attributes, product structures and quantities (the engineering BOM), change management, configuration management, requirements, and metadata access rights. PDM is a subset; most enterprise PLM systems include a PDM layer underneath.

The cleaner way to think about the boundary is by ambition. PDM has no ambitions outside engineering. PLM has explicit ambitions to extend across the full lifecycle — into manufacturing, service, and end of life — but in practice it usually gets overruled at the seams by neighboring systems: ERP for operations, MES for the shop floor, CRM for the customer relationship, MRO for service. Where the org chart draws those lines is where PLM's reach actually ends.

See: PDM-to-PLM history.

PLM ≠ ERP

PLM and ERP own different layers of the same product. PLM owns the product definition: the disciplines of ideation, design, engineering, simulation, and (ideally) manufacturing engineering. ERP owns operations: resources, finances, HR, purchase orders, inventory, and the transactional side of running a business. The technical artifact at the boundary is the BOM. The engineering BOM (eBOM) lives in PLM; the manufacturing BOM (mBOM) lives in ERP or MES. Every major PLM platform offers a way to derive the mBOM from the eBOM, and yet, in the real world, most of that conversion still happens in Excel because the spreadsheet is the easier, more familiar route. The digital thread fractures at the engineering-manufacturing seam, and the work of stitching it back together is manual and error-prone. This is an active debate on The Future of PLM podcast.

The split is also a religious debate, not just a technical one. The eBOM-vs-mBOM line is a turf war disguised as an integration problem, and it gets sharper at the edges: PLM does not always manage CAM well, manufacturing engineering frequently sits in a no-man's-land between the two systems, and the structural disconnect between engineering and manufacturing is reinforced by a philosophical one — engineering optimizes for design intent, operations optimizes for throughput. Modern enterprises ship integrations between PLM and ERP and call it solved. Look closer and you usually find a spreadsheet doing the real work.

PLM ≠ MES

MES (Manufacturing Execution System) is the execution layer to PLM's definition layer. MES owns the bill of process, the tools, and the execution of the work instructions on the shop floor. The work instructions and process plans themselves can be authored in PLM or in MES — that authorship boundary is one of the more contested seams in modern manufacturing IT — but they are always executed by MES (or by ERP, in less mature stacks). PLM tells MES what to make and how it should be made; MES tells back what was actually made, when, by which operator, with which tool, against which revision. That feedback loop is where the digital thread becomes real instead of decorative, and it is increasingly the focus of investment for any manufacturer serious about traceability, yield, or AI-grade training data.

Core PLM Capabilities

Six capabilities sit at the center of any serious enterprise PLM system. None are optional. The depth and sophistication of each varies wildly by industry — the BOM tooling a fashion brand needs is not the BOM tooling an aircraft engine maker needs — but the categories are universal.

1. Bill of Materials (BOM) Management

The BOM is the spine of PLM. Everything else hangs off it. A BOM is a structured, multi-level list of every part, sub-assembly, raw material, and (increasingly) every piece of software and electronics that goes into a product, with quantities and relationships. PLM manages multiple views of the BOM for different audiences — the engineering BOM (eBOM) reflects how the product is designed, the manufacturing BOM (mBOM) reflects how it is built, the service BOM (sBOM) reflects how it is maintained — and the bridges between them.

Example (the pump): The pump's eBOM has 312 line items grouped by sub-assembly: impeller, casing, mechanical seal, motor, mounting hardware. The mBOM regroups the same 312 items by build sequence — frame first, then bearings, then shaft, then impeller, then casing — with consumables (gaskets, threadlocker, lubricant) added that the eBOM does not track. Example (apparel): A jacket's "BOM" is a tech pack — fabric yardage, trims, thread color codes, label placement, size grading curves. Same primitive, completely different shape.
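The regrouping described in the pump example can be sketched in a few lines of Python. This is a minimal illustration, not any vendor's data model: the `BomLine` fields, the part numbers, the build-step mapping, and the consumable are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class BomLine:
    part_number: str   # hypothetical part numbers, for illustration only
    description: str
    quantity: int
    sub_assembly: str  # eBOM grouping: how the product is designed


# A tiny slice of an engineering BOM, grouped by design sub-assembly.
EBOM = [
    BomLine("P-100", "Impeller", 1, "impeller"),
    BomLine("P-200", "Casing half", 2, "casing"),
    BomLine("P-300", "Shaft", 1, "impeller"),
    BomLine("P-400", "Bearing", 2, "motor"),
]

# Assumed mapping from part to build step: how the product is built.
BUILD_STEP = {"P-400": 1, "P-300": 2, "P-100": 3, "P-200": 4}

# Consumables that only the manufacturing view tracks, keyed by build step.
CONSUMABLES = {4: [BomLine("C-900", "Casing gasket", 1, "consumable")]}


def derive_mbom(ebom, build_step, consumables):
    """Regroup eBOM lines by build step and append per-step consumables."""
    mbom = defaultdict(list)
    for line in ebom:
        mbom[build_step[line.part_number]].append(line)
    for step, extras in consumables.items():
        mbom[step].extend(extras)
    return dict(sorted(mbom.items()))


mbom = derive_mbom(EBOM, BUILD_STEP, CONSUMABLES)
for step, lines in mbom.items():
    print(step, [line.part_number for line in lines])
```

The same parts appear in both views; only the grouping key changes, and the manufacturing view gains lines the engineering view never carried. A real derivation also has to handle phantom assemblies, alternates, and plant-specific routing, which this sketch leaves out.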

2. Change Management

Engineering change is the most-litigated process in any manufacturing company. PLM governs it through a three-stage flow that is functionally similar across vendors and industries, even where the names and the ordering of the stages differ: an Engineering Change Request (ECR) raises a problem or proposes an improvement, an Engineering Change Notice (ECN) describes the fix and routes it for review, and an Engineering Change Order (ECO) authorizes the implementation and the effectivity date. Each gate has reviewers, sign-offs, and an audit trail. Without governed change, every other downstream system (manufacturing, service, regulatory) is operating on assumptions.

Example (the pump): A supplier discontinues the bearing on the pump's drive shaft. ECR opens with the problem. Engineering finds a drop-in substitute, files an ECN with the redlined drawing and a test plan. After verification, the ECO is released with effectivity "serial 14501 onwards" — units already in the field are not affected; units in mid-build at that serial are. Example (medical device): A change to a pacemaker's firmware requires the same flow but with regulatory review built into the gates. The ECO does not release until the FDA submission package is approved internally.
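The gated flow and the serial-number effectivity from the bearing example can be sketched as a small state machine. This is an illustrative sketch under simple assumptions: the `Change` class, the approver names, and the strict one-way ordering are hypothetical, and real systems add rejection loops and parallel reviews.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Stage(Enum):
    ECR = "request"
    ECN = "notice"
    ECO = "order"


# Each stage may only advance to the next one; no gate can be skipped.
NEXT_STAGE = {Stage.ECR: Stage.ECN, Stage.ECN: Stage.ECO}


@dataclass
class Change:
    summary: str
    stage: Stage = Stage.ECR
    effective_from_serial: Optional[int] = None
    audit_trail: list = field(default_factory=list)

    def advance(self, approver: str) -> None:
        """Move to the next stage and record who approved the transition."""
        if self.stage not in NEXT_STAGE:
            raise ValueError("change is already at the ECO stage")
        next_stage = NEXT_STAGE[self.stage]
        self.audit_trail.append((self.stage.name, next_stage.name, approver))
        self.stage = next_stage

    def release(self, from_serial: int) -> None:
        """Set effectivity; only allowed once the change is an ECO."""
        if self.stage is not Stage.ECO:
            raise ValueError("only an ECO can set effectivity")
        self.effective_from_serial = from_serial

    def applies_to(self, serial: int) -> bool:
        return (self.effective_from_serial is not None
                and serial >= self.effective_from_serial)


change = Change("Replace discontinued drive-shaft bearing")
change.advance("design.lead")    # ECR -> ECN
change.advance("change.board")   # ECN -> ECO
change.release(from_serial=14501)
print(change.applies_to(14500), change.applies_to(14501))  # False True
```

The point of the sketch is that effectivity is data attached to a released change, so "does this unit carry the new bearing?" becomes a comparison rather than an investigation.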

3. Configuration Management

Configuration management answers the question "which version of the product did we ship to which customer, and what is in it?" It handles variants (different flavors of the same product line), options (customer-selectable features), and effectivity (the date or serial number at which a change applies). For complex products, this is the difference between being able to service what you sold and not.

Example (tractor): A row-crop tractor sold to a US customer has a different transmission, different tires, different emissions software, and different decals than the same model sold into the EU. Configuration management ensures the as-shipped configuration of unit #4823 — including the precise revision of every electronic control module — is recoverable five years later when a service technician needs to order parts. Example (the pump): The pump is sold in three power ratings, two seal types, and four port orientations. Configuration management ensures only valid combinations can be ordered, and each ordered combination resolves to a specific BOM revision.
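The pump's option space is small enough to enumerate, which makes the idea of valid combinations concrete. In this sketch the option values, the single engineering rule, and the BOM naming scheme are assumptions made up for illustration; a real configurator would look the BOM revision up rather than build a string.

```python
from itertools import product

POWER_KW = (5, 11, 22)                        # three power ratings (values assumed)
SEALS = ("single", "double")                  # two seal types
PORTS = ("top", "left", "right", "bottom")    # four port orientations


def is_valid(power_kw, seal, port):
    """Hypothetical engineering rule: the 22 kW unit requires a double seal."""
    return not (power_kw == 22 and seal == "single")


def resolve_bom(power_kw, seal, port):
    """Map a valid option set to a configured BOM identifier (assumed scheme)."""
    if not is_valid(power_kw, seal, port):
        raise ValueError(
            f"invalid configuration: {power_kw} kW, {seal} seal, {port} port"
        )
    return f"PUMP-{power_kw}KW-{seal[0].upper()}-{port[0].upper()}"


all_combinations = list(product(POWER_KW, SEALS, PORTS))
valid = [combo for combo in all_combinations if is_valid(*combo)]

print(len(all_combinations), len(valid))   # 24 20
print(resolve_bom(11, "single", "left"))   # PUMP-11KW-S-L
```

Three ratings, two seals, and four orientations give 24 combinations on paper; one rule removes four of them. Real products carry hundreds of such rules, which is why the logic belongs in a governed system rather than in an order-entry clerk's memory.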

4. Document and CAD Management

Underneath PLM sits a PDM layer that manages the CAD files and engineering documents themselves. This includes versioning, check-in and check-out controls (so two engineers don't simultaneously edit the same part), assembly structure consistency, and multi-CAD interoperability — because no real engineering organization runs on a single CAD vendor. PLM extends document management to the wider corpus: requirement specifications, test reports, supplier datasheets, certificates of conformity, service manuals.

Example (the pump): The pump assembly has parts authored in three different CAD systems — the impeller in SolidWorks, the casing in Creo, the motor purchased as a STEP file from the supplier. PLM holds the unified assembly structure, controls who can check out which part, and enforces that the released revision of the assembly references released revisions of every child component. Example (aerospace bracket): A single titanium bracket has its native CAD file, a PDF of the dimensioned drawing, an FEA simulation report, a stress-analysis sign-off memo, a first-article inspection report, and a material certification — all linked to the same part record so that any one of them can be retrieved against the part number twenty years later.
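The rule that a released assembly may only reference released children is easy to state as a check. The sketch below assumes a flat assembly and invented revision identifiers; a production check would walk the full multi-level structure.

```python
# Lifecycle state of each revision; identifiers are hypothetical.
REVISION_STATE = {
    "IMPELLER/B": "released",
    "CASING/C": "released",
    "MOTOR/A": "in_work",
}

ASSEMBLY = {
    "id": "PUMP-ASSY/D",
    "children": ["IMPELLER/B", "CASING/C", "MOTOR/A"],
}


def unreleased_children(assembly, revision_state):
    """Return the child revisions that would block release of the assembly."""
    return [
        child for child in assembly["children"]
        if revision_state.get(child) != "released"
    ]


def can_release(assembly, revision_state):
    return not unreleased_children(assembly, revision_state)


blockers = unreleased_children(ASSEMBLY, REVISION_STATE)
print(blockers)  # ['MOTOR/A']
```

An unknown child is treated as a blocker too, since `revision_state.get` returns `None` for it. Failing closed is the safer default for a release gate.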

5. Workflow and Lifecycle State

Every controlled object in PLM — a part, a document, a BOM, an ECO — moves through lifecycle states: in work, under review, released, obsolete. Workflow tooling routes each transition to the right approvers, captures the electronic signatures, and writes the audit trail. The two ideas are inseparable: lifecycle state defines where an object is, workflow defines how it moves and who says yes.

Example (the pump): The released revision of the impeller drawing is the only revision manufacturing is allowed to build to. When engineering wants to change it, they create a new revision in in-work state, route it through review, and only when the workflow completes does the new revision become released and the previous revision drop to superseded. Example (pharmaceuticals): The same primitive applied to a drug formulation specification — except the workflow gates include regulatory affairs sign-off, the released-revision rule is enforced by 21 CFR Part 11 electronic-signature requirements, and the audit trail is itself a regulated artifact subject to FDA inspection.
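The revise-review-release cycle for the impeller drawing can be sketched as a transition table plus one side effect: releasing a revision supersedes the one before it. State names follow the text; the `Part` class, approver names, and the exact set of allowed transitions are assumptions for illustration.

```python
# Allowed lifecycle transitions (an assumed, simplified table).
ALLOWED = {
    "in_work": {"under_review"},
    "under_review": {"in_work", "released"},
    "released": {"superseded", "obsolete"},
    "superseded": {"obsolete"},
    "obsolete": set(),
}


class Part:
    def __init__(self, number):
        self.number = number
        self.revisions = {}   # revision label -> lifecycle state
        self.audit = []       # (revision, from_state, to_state, approver)

    def new_revision(self, rev):
        self.revisions[rev] = "in_work"

    def transition(self, rev, target, approver):
        current = self.revisions[rev]
        if target not in ALLOWED[current]:
            raise ValueError(f"{current} -> {target} is not allowed")
        if target == "released":
            # Releasing a revision supersedes any previously released one.
            for other, state in self.revisions.items():
                if state == "released":
                    self.revisions[other] = "superseded"
                    self.audit.append((other, "released", "superseded", approver))
        self.revisions[rev] = target
        self.audit.append((rev, current, target, approver))

    def released_revision(self):
        """The single revision manufacturing is allowed to build to."""
        for rev, state in self.revisions.items():
            if state == "released":
                return rev
        return None


impeller = Part("IMP-001")
for rev in ("A", "B"):
    impeller.new_revision(rev)
    impeller.transition(rev, "under_review", approver="design.lead")
    impeller.transition(rev, "released", approver="change.board")

print(impeller.revisions)            # {'A': 'superseded', 'B': 'released'}
print(impeller.released_revision())  # B
```

Keeping the state table separate from the routing logic mirrors the split the text describes: lifecycle state says where an object is, workflow says how it moves and who approved the move.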

6. Requirements Management

Modern PLM increasingly anchors requirements alongside the parts they govern. A requirement is a statement of what the product must do, with traceability to the design element that satisfies it, the verification activity that proves it, and the change history of how the requirement evolved. The link between a requirement, a part, a test, and the test result is what requirements traceability means in practice — and it is the difference between being able to certify a product and not.

Example (the pump): "The pump shall maintain rated flow at 15% suction-pressure variation" is a requirement. It is satisfied by the impeller geometry and the mechanical-seal design (traceability to parts), verified by a CFD simulation and a wet-test report (traceability to verification), and audited at the ECO gate any time either part is changed. Example (defense vehicle): A single armored vehicle program may carry 30,000 customer requirements, each linked to one or more design elements and one or more verification activities, with every requirement-to-design-to-verification link capable of being printed into a compliance matrix the customer reviews at every program milestone.
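Traceability links are, at bottom, a small graph that can be queried in both directions. The sketch below uses the pump requirement from the example plus one invented requirement with a verification gap; the identifiers and the dictionary layout are hypothetical.

```python
REQUIREMENTS = {
    "REQ-001": {
        "text": "Maintain rated flow at 15% suction-pressure variation",
        "satisfied_by": ["impeller", "mechanical seal"],
        "verified_by": ["CFD simulation", "wet-test report"],
    },
    "REQ-002": {  # hypothetical requirement with no verification evidence yet
        "text": "Operate continuously at 60 C ambient",
        "satisfied_by": ["motor"],
        "verified_by": [],
    },
}


def impacted_by(part, requirements):
    """Requirements to re-audit at the ECO gate when this part changes."""
    return sorted(
        rid for rid, req in requirements.items() if part in req["satisfied_by"]
    )


def unverified(requirements):
    """Requirements that have no verification activity linked."""
    return sorted(rid for rid, req in requirements.items() if not req["verified_by"])


def compliance_matrix(requirements):
    """One row per requirement-to-part link, with its verification evidence."""
    rows = []
    for rid, req in sorted(requirements.items()):
        evidence = ", ".join(req["verified_by"]) or "MISSING"
        for part in req["satisfied_by"]:
            rows.append((rid, part, evidence))
    return rows


print(impacted_by("impeller", REQUIREMENTS))  # ['REQ-001']
print(unverified(REQUIREMENTS))               # ['REQ-002']
for row in compliance_matrix(REQUIREMENTS):
    print(row)
```

The compliance matrix a customer reviews at a milestone is just this structure printed out, which is why the links have to be maintained as data rather than reconstructed before each review.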

Other capabilities — supplier and quality integration, classification and part numbering, regulatory and compliance tracking, sustainability and material declarations, MBSE and systems-engineering integration — are integral to mature PLM deployments without being foundational in the way these six are. They build on top of the BOM, change, configuration, document, workflow, and requirement primitives, not next to them.

Why PLM Matters

PLM exists because every product organization, sooner or later, has to answer questions that span engineering, manufacturing, service, and the regulator — and the answers have to be the same regardless of who is asking. "If we recall units 1000–2000, what's the parts impact?" is a recall-scope question. "What revision of which firmware shipped with this serial number?" is a configuration-traceability question. "Can this product configuration be sold in the EU after the new battery regulation kicks in?" is a regulatory question. "Which design changes between v3 and v4 actually affected the certified envelope?" is a change-impact question. "How much carbon is in this BOM, and which suppliers have submitted material declarations?" is a sustainability question. None of these questions can be answered reliably from a fileserver, a spreadsheet, or an ERP. They can only be answered from a system that knows the truth about the product — what it is made of, how it has changed, which configuration is valid for which market, and what evidence exists that it does what it says it does.
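To make "the answer is a query" concrete, here is a minimal sketch of a recall-scope question asked against as-shipped records. The serial numbers, part names, and revisions are invented, and a real system would query a governed database rather than an in-memory dictionary.

```python
# As-shipped records: serial number -> {part name: revision}. Data is invented.
AS_SHIPPED = {
    999:  {"impeller": "A", "bearing": "A"},
    1000: {"impeller": "A", "bearing": "A"},
    1500: {"impeller": "B", "bearing": "A"},
    2000: {"impeller": "B", "bearing": "B"},
    2001: {"impeller": "B", "bearing": "B"},
}


def recall_scope(as_shipped, first_serial, last_serial, part, bad_revision):
    """Serials in the range that shipped with the affected part revision."""
    return sorted(
        serial
        for serial, parts in as_shipped.items()
        if first_serial <= serial <= last_serial
        and parts.get(part) == bad_revision
    )


scope = recall_scope(AS_SHIPPED, 1000, 2000, part="bearing", bad_revision="A")
print(scope)  # [1000, 1500]
```

The function is trivial; the hard part is that the as-shipped record exists at all, with the revision of every part captured at the moment the unit left the building.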

The stakes for getting these answers right have shifted sharply in the last decade. Sustainability regulation is now operational, not aspirational: the EU's Corporate Sustainability Reporting Directive (CSRD) requires audited disclosures grounded in supplier-level material data, and the EU Digital Product Passport — rolling out across batteries, textiles, electronics, and construction products — requires manufacturers to publish a structured, queryable record of every product placed on the EU market. Both demand BOM-grade data discipline; neither is satisfiable from a spreadsheet. The EU AI Act adds a parallel pressure on industrial AI systems: any model trained on product data, or making decisions about products, inherits an obligation to demonstrate the integrity of the data behind it. Industrial AI itself raises the bar a third time — a copilot reasoning about a product is only as reliable as the BOM, change history, and configuration metadata it is given. Garbage in, hallucinations out, and the hallucinations show up in customer-facing recommendations.

Beneath the regulatory and AI pressure sits the older, plainer reason PLM matters: the cost of not having it scales non-linearly with product complexity and organizational size. Brownfield manufacturers without PLM accumulate orphaned spreadsheets that drift from the engineering reality. They ship products against ambiguous BOM versions because two engineers disagree about which revision is current and neither has authority to decide. They carry untraceable changes — a part substituted at a supplier nobody documented — that surface only at warranty claim, recall, or audit. They develop ECO bottlenecks at the engineering-to-manufacturing handoff because every change requires a meeting to reconcile what should already be a query. They lose institutional knowledge every time an engineer leaves, because that engineer's understanding of which drawing is the real one was never written down. Each of these failures is survivable in isolation. The combined drag — on cycle time, on quality, on regulatory posture, on the ability to use the company's own data for AI — is what eventually forces the conversation that brought you to this page.

A Brief History

PLM did not arrive fully formed. It accreted, in four overlapping waves, from a much narrower problem: how to keep two engineers from overwriting each other's drawing files.

CAD changed engineering from drawings on paper to files on disk. Once there were files, somebody had to manage them — version them, control who could edit them, lock them when they were checked out, reassemble them into something that resembled a product. PDM (Product Data Management) was that somebody. Through the late 1980s and 1990s, every major engineering organization that had bought CAD seats was eventually forced to buy or build a PDM layer underneath, because the alternative was chaos — overwrites, missing files, and no way to know which version of which part was the current one. PDM solved a real problem and created a new one: now there was a structured database of parts and assemblies, but it stopped at the engineering door.

The shift from PDM to PLM happened when two parallel things became obvious. First, the structured part-and-assembly data PDM was holding could be extended to changes, configurations, requirements, supplier records, the full set of artifacts that surround a part across its lifecycle. Second, the discipline of managing all of that was itself a strategic capability, not a CAD-adjacent IT chore. SDRC's Metaphase, born inside the I-DEAS CAD ecosystem in the early 1990s, is the canonical example of the PDM-to-PLM evolution: a tool built to manage CAD files that grew, acquisition by acquisition, into the data backbone of a full lifecycle. SDRC was acquired by EDS, folded into UGS, and became part of what is now Siemens Teamcenter, which makes Metaphase ancestral DNA in one of today's big-three PLM platforms. The same era saw PTC follow the same arc from CAD-data manager to lifecycle platform, moving from Pro/INTRALINK to Windchill, which came into PTC by acquisition in 1998.

The next evolution was from PLM-as-tool to PLM-as-business-platform, and the canonical story there is MatrixOne. Where Metaphase's lineage was CAD-up — a PDM that grew into PLM — MatrixOne's was business-platform-down: a flexible enterprise data model that could be configured to govern engineering processes without being born in an engineering tool. Dassault Systèmes acquired MatrixOne in 2006, rebranded it as ENOVIA Matrix, and used its data architecture as the foundation for what eventually became 3DEXPERIENCE. The acquisition signaled a strategic bet that PLM was no longer a CAD accessory — it was an enterprise platform that needed enterprise-platform plumbing.

By the mid-2010s the PLM category was mature, the big three were entrenched, and the conversation began shifting from "do we have a system of record" to "is our system of record actually connected to anything." That shift gave us the digital thread — the idea that the data PLM holds about a product should remain consistent, queryable, and traceable as it flows downstream into manufacturing, service, and the field, and back upstream as feedback. The digital thread is less a product than an architectural ambition: the same product identity, the same part references, the same configuration logic, surviving every system boundary it crosses. The story is ongoing. Every PLM vendor today claims to enable it; almost no manufacturer has fully achieved it; and the architectural debates about how to actually deliver it — suite-centric versus thread-centric, monolithic versus federated, screen-scraped versus API-governed — are the live edge of the PLM industry as of 2026. For the longer-form treatment, see PDM History 101 — Part 6: Toward PLM and the Digital Thread.

The Big Three (and the Challengers)

Three vendors dominate enterprise PLM, each with a distinct architectural lineage and a distinct industry center of gravity. A credible enterprise alternative — Aras — sits next to them, and a wave of cloud-native and AI-native challengers is attacking specific gaps from below.

Dassault Systèmes — 3DEXPERIENCE / ENOVIA

Dassault's PLM lineage runs through two acquisitions stitched onto a CAD platform born inside French aerospace. The CATIA CAD system, originally built at Dassault Aviation in 1977, anchored the early product strategy; the PLM layer came later, from the 1999 acquisition of SmarTeam for the mid-market PDM-to-PLM ramp and the 2006 acquisition of MatrixOne (now ENOVIA Matrix) for the enterprise data model. The current product is the 3DEXPERIENCE Platform, a unified architecture where ENOVIA (PLM), CATIA (CAD), DELMIA (manufacturing), SIMULIA (simulation), and a long list of "experience apps" all share a common data model and a common UI shell. Architecturally it is the most platform-native of the big three: every module is built on the same backbone, which is also its most controversial property among customers who would rather buy capabilities than buy into a worldview. Dassault is strongest in aerospace, defense, transportation and mobility, and life sciences, industries where the integration of CAD, simulation, and lifecycle data inside a single environment pays off. The distinguishing positioning fact: 3DEXPERIENCE is the only big-three platform that treats PLM as one tenant of a larger business-experience platform, not as the platform itself.

PTC — Windchill (and Arena, and Onshape, and Codebeamer)

PTC's PLM lineage starts with Pro/ENGINEER (1987) — the first parametric, feature-based, history-driven solid modeler — and the Pro/INTRALINK PDM tool that shipped with it. Windchill emerged in the late 1990s as the web-architected successor and grew, organically and via acquisition (Computervision's CADDS, later MKS Integrity for ALM, Arbortext for technical documentation, Codebeamer for application lifecycle, Arena for cloud PLM, Onshape for cloud CAD), into a discrete-manufacturing-focused enterprise stack. The current architecture is multi-product rather than monolithic: Windchill remains the on-premises and private-cloud enterprise PLM, Arena PLM addresses the cloud-native mid-market (acquired 2021), Onshape delivers cloud CAD with a tightly integrated PDM layer, and Codebeamer covers application lifecycle and requirements management — with PTC's "ThingWorx + Vuforia" IoT and AR adjacencies wired in for service and field-data feedback. PTC is strongest in industrial equipment, electronics, medical devices, and the discrete-manufacturing midmarket, with a notable pull in companies that need a working digital thread between PLM, IoT, and service. The distinguishing positioning fact: PTC is the only big-three vendor with both an enterprise PLM (Windchill) and a cloud-native PLM (Arena) in its portfolio, addressing two different buying centers without forcing a single architectural answer.

Siemens Digital Industries Software — Teamcenter

Siemens' PLM lineage is the product of the most aggressive acquisition strategy in the category. The line runs through Unigraphics (1973, originally from United Computing, which McDonnell Douglas later acquired) and the iMAN PDM that shipped with it, plus SDRC's Metaphase (acquired by EDS, folded into UGS), with Siemens completing the arc in 2007 by acquiring UGS itself. Teamcenter is the consolidated descendant of all of those threads. Architecturally Teamcenter is the most modular of the big three: a deep, configurable foundation that customers can deploy as a tightly-scoped PDM, as a full enterprise PLM, or as the spine of an integrated industrial-software stack alongside NX (CAD), Simcenter (simulation), Tecnomatix (manufacturing planning), Opcenter (MES), and Mendix (low-code). It is also the most enterprise-IT-flexible: on-premises, private cloud, or Siemens' own Xcelerator-as-a-Service SaaS. Siemens is strongest in automotive, heavy machinery, industrial equipment, electronics, and increasingly the broader Industry 4.0 stack where the PLM-to-MES-to-IoT thread is the actual deliverable. The distinguishing positioning fact: Teamcenter is the only big-three platform with a credible end-to-end story across PLM, MES, IoT, and low-code, the result of a parent company that owns industrial automation hardware as well as software.

Aras — Innovator

Aras Innovator is the credible enterprise alternative to the big three, and its positioning is deliberate. Founded in 2000 around a low-code, model-based PLM platform, Aras opted for a subscription model that includes the source code and the configuration framework, so customers extend the platform through a documented data model rather than negotiate change requests with the vendor. The architectural distinction is real: Aras is the only enterprise-grade PLM where the customer's deep configuration survives the vendor's upgrades because configuration is data, not a code fork. That property has made Aras a frequent choice in two situations: regulated industries where configuration governance is itself a compliance artifact (defense, aerospace, medical devices), and large enterprises that have outgrown the configurability of one of the big three and want the ability to model their own product semantics without negotiating with the platform. Aras is smaller than the big three by revenue and seat count, but its presence in the enterprise shortlist has been consistent for over a decade.

The cloud-native and AI-native wave

A wave of cloud-native and specialist challengers has been attacking the PLM market from below since roughly 2018, addressing two structural gaps: the mid-market that the big three serve at the wrong price and complexity, and the modern category-shaping problems (sustainability, electronics-software-firmware traceability, AI-native workflows) that the suites have been slow to solve natively. Arena (now part of PTC) pioneered cloud PLM for high-mix electronics and medical-device manufacturers and remains the canonical cloud-native PLM in that segment. Propel built PLM directly on the Salesforce platform, betting that the seam between PLM, CRM, and quality is where the modern buyer wants the integration to already exist. OpenBOM went the other direction — a lightweight, browser-and-spreadsheet-native BOM and inventory tool that meets engineering teams where their data already lives. Duro focuses on hardware startups and contract-manufacturing-heavy electronics teams that need PLM discipline without a six-month implementation. Aletiq (France) and a growing European cohort target small-to-mid-size manufacturers with cloud-first deployments and a regulatory-sensitive design tilt. Makersite sits adjacent to PLM rather than replacing it — a sustainability and supply-chain intelligence platform that ingests BOMs from any PLM and computes per-part carbon, regulatory, and supply-risk profiles, addressing a problem that is operational under CSRD and the Digital Product Passport but not natively solved inside any of the big three. The deeper market view, including the AI-native entrants, is in The New Generation: 30+ Startups Proving PLM Disruption Is Real.

Where PLM Is Going

PLM is in the middle of an architectural shift, and the shift is not optional. The suite-centric model — one vendor's platform owning the BOM, the change process, the configuration logic, the workflow engine, the document store, and the UI shell, all glued together by a shared data model and a shared license agreement — was the right answer for the era when integration was the hardest problem in enterprise software. That era is ending. The hardest problem now is not integration, it is governed access — the ability to expose the right slice of product data to the right system, the right tool, and the right agent, with the right permissions and the right audit trail, at the speed of a query rather than the speed of a quarterly integration project. Suite-centric PLM solves the first problem and is structurally bad at the second. Thread-centric PLM is what comes next: a narrower PLM Core that owns the part identity, the BOM, the change history, the configuration logic, and the lifecycle state — and exposes all of it through governed contracts to a federation of best-of-breed tools (simulation, manufacturing, sustainability, requirements, AI copilots) that attach to the thread rather than sit inside the suite. The full architectural argument is in From Suite-Centric to Thread-Centric PLM; the short version is that the suite was an answer to a 1990s integration problem, and modern manufacturers need an architecture answering a 2026 access problem.

The technical primitive that makes thread-centric PLM operationally credible, rather than aspirational, is the Model Context Protocol (MCP). MCP is an open protocol, first published by Anthropic in late 2024, that defines how an AI agent (or any other client) discovers and requests structured data and tool invocations from a host system, and how the host returns structured responses; the permissions and audit metadata that make those responses governed are supplied by the server that implements the protocol. For PLM, MCP matters because it addresses a problem the industry has been working around for thirty years: how to let downstream systems and AI agents read the BOM, the change history, and the configuration logic without screen-scraping a UI, without taking a nightly database extract, and without hand-crafting a brittle point-to-point integration for every consumer. An MCP-anchored PLM exposes the BOM as a governed contract: the schema is explicit, the permissions are enforced at the edge, every read and every write is audited, and the consumer (whether it is a sustainability platform computing per-part carbon, an MES pulling work-instruction effectivity, or an AI copilot answering a service engineer's question) interacts with a published interface rather than reverse-engineered access. This is the difference between a digital thread that is decorative and a digital thread that is operational, and it is the architectural prerequisite for everything in the next paragraph.
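
For a sense of what "a published interface" looks like on the wire, the sketch below builds the JSON-RPC 2.0 message an MCP client sends to invoke a tool (`tools/call`) and checks the arguments against the tool's declared input schema. The `get_bom` tool, its arguments, and the hand-rolled `check_arguments` helper are hypothetical; the helper covers only required keys and string types, not full JSON Schema, and no official MCP SDK is used here.

```python
import json

# Hypothetical tool descriptor in the shape MCP servers use to advertise tools:
# a name, a description, and a JSON Schema describing the arguments.
GET_BOM_TOOL = {
    "name": "get_bom",
    "description": "Return the BOM lines for a part at a given revision.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "part_number": {"type": "string"},
            "revision": {"type": "string"},
        },
        "required": ["part_number", "revision"],
    },
}


def build_tool_call(request_id, name, arguments):
    """Build the JSON-RPC 2.0 request an MCP client sends to invoke a tool."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }


def check_arguments(tool, arguments):
    """Illustrative subset of schema checking: required keys and string types."""
    schema = tool["inputSchema"]
    errors = []
    for key in schema["required"]:
        if key not in arguments:
            errors.append(f"missing required argument: {key}")
    for key, value in arguments.items():
        spec = schema["properties"].get(key)
        if spec is None:
            errors.append(f"unexpected argument: {key}")
        elif spec["type"] == "string" and not isinstance(value, str):
            errors.append(f"argument {key} must be a string")
    return errors


request = build_tool_call(1, "get_bom", {"part_number": "PUMP-1001", "revision": "C"})
print(json.dumps(request, indent=2))
print(check_arguments(GET_BOM_TOOL, request["params"]["arguments"]))
```

A server that rejects malformed arguments before touching PLM data is what turns "the schema is explicit" from a documentation claim into an enforced one.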

Agentic AI is the operational shift that makes the thread-centric architecture urgent rather than merely sound. The first-order change is at the workflow surface: today, engineers click through ECO workflows, navigate Windchill or Teamcenter or 3DEXPERIENCE screens, and key in change reasons, effectivity dates, and approval routings by hand; tomorrow, agents draft change requests from problem reports, route them through governed gates, attach the impact analysis they ran themselves, and execute the implementation against the system of record — with humans reviewing the gates that matter and approving in bulk where they don't. PLM's job in that world becomes governance, not data entry: the platform's value is the integrity of the rules the agent must operate against — what configuration is valid, who can approve which gate, which effectivity logic applies — not the screens the human used to use. The second-order change is darker and matters more for the system architect. Agents create a new category of failure that did not exist in the screen-and-spreadsheet era. If a copilot hallucinates a BOM line item, misreads an effectivity, or routes an ECO against a stale configuration, the consequences compound — silently, at machine speed, across thousands of touchpoints. The old failure mode was a human staring at a wrong screen; the new failure mode is an agent confidently writing wrong data into the system of record while every other agent downstream reads it as truth. That is not a failure mode the industry has tooling for yet, and it is exactly the failure mode that bad PLM data, badly governed access contracts, and badly architected suites will manufacture at scale. The longer treatment is in The Agentic AI Revolution in PLM; the conclusion for this post is simpler. 
The bar on PLM data discipline is rising, the architecture that meets the new bar is thread-centric, and the manufacturers who treat the next few years as a routine modernization rather than an architectural reset will spend the back half of the decade explaining to their regulators, their customers, and their own engineers why their AI told them something that was not true.
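
One of the failure modes named above, an agent writing against a stale configuration, has a well-known guard: optimistic concurrency, where every write must cite the revision it was based on and is rejected if the record has moved on. The sketch below illustrates that idea under stated assumptions; `ChangeStore`, `StaleConfigurationError`, and the in-memory BOM are hypothetical names, not part of any PLM product.

```python
class StaleConfigurationError(Exception):
    """Raised when a write is based on a revision that is no longer current."""


class ChangeStore:
    def __init__(self):
        # Hypothetical in-memory store: part number -> (revision counter, BOM lines).
        self._state = {"PUMP-1001": (3, ["impeller", "casing", "shaft"])}

    def read(self, part_number):
        revision, lines = self._state[part_number]
        return revision, list(lines)

    def write(self, part_number, based_on_revision, new_lines):
        current_revision, _ = self._state[part_number]
        # Reject the write if the agent read an older revision than the current one.
        if based_on_revision != current_revision:
            raise StaleConfigurationError(
                f"{part_number}: write based on revision {based_on_revision}, "
                f"current revision is {current_revision}"
            )
        self._state[part_number] = (current_revision + 1, list(new_lines))
        return current_revision + 1


store = ChangeStore()
rev_a, lines_a = store.read("PUMP-1001")   # agent A reads revision 3
rev_b, lines_b = store.read("PUMP-1001")   # agent B reads revision 3
store.write("PUMP-1001", rev_a, lines_a + ["seal"])  # accepted, creates revision 4
try:
    store.write("PUMP-1001", rev_b, lines_b + ["gasket"])  # B is now stale
except StaleConfigurationError as err:
    print("rejected:", err)
```

This does not catch a hallucinated line item, which still needs validation rules and human gates, but it does stop the silent overwrite case at the system of record rather than downstream.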

Where to Go Next

If you came here looking for one thing in particular:

- A specific term — PLM Glossary

- The history of how we got here — PLM History 101 series

- The vendors — Vendor PLM Histories

- The future architecture — Thread-Centric PLM

- The AI angle — Agentic AI in PLM (6-part series)

- The startups — PLM Startups Landscape

Cite this article

Finocchiaro, Michael. “What is PLM? A Plain-English Definition for 2026.” DemystifyingPLM, May 2, 2026, https://www.demystifyingplm.com/what-is-plm

PLM industry analyst · 35+ years at IBM, HP, PTC, Dassault Systèmes

Firsthand knowledge of the evolution from early 3D modeling kernels to today's cloud-native platforms and agentic AI — the history, strategy, and future of PLM.